What is Data Cleaning?

The Data Cleaning process identifies and corrects or removes errors and inconsistencies in the data. It is a crucial step in the data preprocessing phase in any Data Analysis.

The primary objective of Data Cleaning is to improve the data quality and make it more accurate for analysis.

Common Errors in Data:

The following errors commonly occur in data:

- Missing values

- Outliers

- Duplicate Records

- Inconsistent Formatting

Missing Values:

Imputing missing values is one way to improve data quality. Analysts encounter three types of missing data, distinguished by whether the missingness is related to other data in the data set: MCAR (Missing Completely at Random), MAR (Missing at Random), and MNAR (Missing Not at Random).

In Missing Completely at Random, the analyst cannot find any pattern that explains why the values in a column are missing. In Missing at Random, values may be missing in a column, but the reason can be explained by the data in other columns, so the missing values can often be predicted from the observed data.

In Missing Not at Random, the missingness is related to the missing value itself and cannot be fully explained by the other variables in the data set. Data Analysts employ several strategies, often with the help of algorithms, to handle missing data. The most common first strategy is to delete the rows with missing values.

How to Deal With Missing Values:

Usually, rows that have a missing value in any cell are deleted. This method is not suitable when many rows have missing values. Instead, the analyst may impute the missing data based on the other columns in the dataset or solely on the values in the same column. Alternatively, the analyst may use regression or classification models to predict the missing values.
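The two simplest strategies described above, deleting incomplete rows and imputing from the same column, can be sketched as follows. This is a minimal illustration using only the Python standard library; the sample records, the column names, and the choice of the median as the fill value are assumptions made for the example.

```python
from statistics import median

# Hypothetical records; None marks a missing value.
rows = [
    {"id": 1, "age": 34, "income": 52000},
    {"id": 2, "age": None, "income": 48000},
    {"id": 3, "age": 29, "income": None},
    {"id": 4, "age": 41, "income": 61000},
]

def drop_incomplete(records):
    """Listwise deletion: keep only rows with no missing cells."""
    return [r for r in records if all(v is not None for v in r.values())]

def impute_median(records, column):
    """Fill missing cells in one column with the median of its observed values."""
    observed = [r[column] for r in records if r[column] is not None]
    fill = median(observed)
    return [{**r, column: fill if r[column] is None else r[column]} for r in records]

complete_rows = drop_incomplete(rows)
imputed_rows = impute_median(impute_median(rows, "age"), "income")
```

Deletion keeps two of the four rows here, which shows why it becomes impractical when many rows have gaps; imputation keeps all four rows at the cost of introducing estimated values.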

Data Cleaning, therefore, means identifying and addressing issues in order to prepare the data for further analysis. It combines manual and automated techniques. Manual review is a dependable way of finding and correcting data errors, but it is time-consuming.

Outliers:

Outliers are data points that lie far from the rest of the data points in a set of observations. An outlier can be a mistake, such as a data entry or measurement error, or a genuine value that varies widely from the rest of the population. Whatever the reason, the analyst needs to identify and handle outliers while cleaning data.

Because outliers differ significantly from the other data points in the data set, they should be addressed carefully, and data cleaning employs various methods to do so. For example, outliers can distort and mislead the training process, resulting in longer training times for data models. Trimming outliers is especially important when data quality is a major concern.

Outliers can be caused by the following:

- Input error or Measurement error

- Data Corruption

- True outlier data observation

Outliers can be identified in the following ways:

- Univariate Method

- Multivariate Method

- Minkowski Error Method

Outliers can be detected for removal using the following methods:

- Testing the Data Set

- Using Standard Deviation Method

- Using Interquartile Range (IQR) Method

- Boxplots

- Z-Score

- Other automated tools
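Two of the methods listed above, the interquartile range and the Z-score, can be sketched with the Python standard library. The sample values, the multiplier of 1.5, and the threshold of 3 are conventional choices assumed for this illustration, not fixed rules.

```python
from statistics import mean, pstdev, quantiles

# Hypothetical measurements with one suspicious value.
values = [10, 12, 11, 13, 12, 11, 10, 12, 13, 11, 95]

def iqr_outliers(data, k=1.5):
    """Flag points outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = quantiles(data, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [x for x in data if x < low or x > high]

def zscore_outliers(data, threshold=3.0):
    """Flag points more than `threshold` standard deviations from the mean."""
    mu = mean(data)
    sigma = pstdev(data)
    if sigma == 0:
        return []  # all values identical, nothing can be an outlier
    return [x for x in data if abs(x - mu) / sigma > threshold]

iqr_flagged = iqr_outliers(values)
z_flagged = zscore_outliers(values)
```

Both functions flag the value 95 in this sample. In small samples the Z-score is the less sensitive of the two, because an extreme value inflates the standard deviation it is compared against.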

Outliers can be handled in the following ways:

- Trimming or Removing the Outlier

- Flooring and Capping (Percentile Based)

- Mean or Median Imputation
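The three handling options above can be compared side by side in a short sketch. It assumes the 10th and 90th percentiles as the floor and cap, and it uses the median of the whole sample as the imputed value; both are illustrative choices, and the sample values are hypothetical.

```python
from statistics import median, quantiles

# Hypothetical measurements with one extreme value.
values = [10, 12, 11, 13, 12, 11, 10, 12, 13, 11, 95]

def percentile_bounds(data, lower_pct=10, upper_pct=90):
    """Return the (lower, upper) percentile values of the data."""
    cuts = quantiles(data, n=100, method="inclusive")  # cuts[i - 1] is the i-th percentile
    return cuts[lower_pct - 1], cuts[upper_pct - 1]

low, high = percentile_bounds(values)

# Trimming: drop every value outside the bounds.
trimmed = [x for x in values if low <= x <= high]

# Flooring and capping: pull out-of-range values back to the nearest bound.
capped = [min(max(x, low), high) for x in values]

# Median imputation: replace out-of-range values with the sample median.
mid = median(values)
imputed = [x if low <= x <= high else mid for x in values]
```

Trimming shortens the data set, while capping and imputation keep its length, which matters when each value belongs to a row that carries other useful columns.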

Duplicate Records:

A copy of an original record in a database is called a duplicate record. Duplicate records occur when data is collected through different channels, scraped, or transferred from one system to another. Duplicate records can ruin the split between train, validation, and test sets, because the same record may end up in more than one set. This can bias performance estimates and result in an unsatisfactory production model. In addition, duplicate records may hurt the learning process greatly.

Therefore, identifying and then merging or removing duplicate records from your existing database is crucial. The process of removing duplicate records from a data set is called Data Deduplication. Data duplication may happen due to mergers and acquisitions, lack of data governance, poor data entry, and third-party data integration. Sometimes software bugs and system errors in applications also result in duplicate records.

Working with duplicates involves three tasks. They are:

- Visualizing Duplication

- Finding and Removing Exact Duplicates

- Finding and Removing Partial Duplicates

Data Deduplication:

Deduplication is the process of comparing, matching, and removing duplicate records from the data. Data Deduplication involves three steps: comparing and matching, handling obsolete records, and creating consolidated records.
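A small sketch can make the difference between exact and partial duplicates concrete. It assumes that a normalized email address identifies a person and that the first non-missing value of each field should survive in the consolidated record; the records and field names are hypothetical.

```python
# Hypothetical contact records.
records = [
    {"name": "Ada Lovelace", "email": "ada@example.com", "phone": "555-0100"},
    {"name": "Ada Lovelace", "email": "ada@example.com", "phone": "555-0100"},  # exact duplicate
    {"name": "ada lovelace ", "email": "ADA@example.com", "phone": None},       # partial duplicate
    {"name": "Alan Turing", "email": "alan@example.com", "phone": "555-0199"},
]

def drop_exact_duplicates(rows):
    """Keep the first occurrence of each fully identical row."""
    seen = set()
    unique = []
    for row in rows:
        key = tuple(sorted(row.items()))
        if key not in seen:
            seen.add(key)
            unique.append(row)
    return unique

def consolidate(rows, key_field="email"):
    """Merge rows that share a normalized key, filling gaps from later rows."""
    merged = {}
    for row in rows:
        key = row[key_field].strip().lower()  # assumes the key field is never missing
        if key not in merged:
            merged[key] = dict(row)
            continue
        for field, value in row.items():
            if merged[key][field] is None and value is not None:
                merged[key][field] = value
    return list(merged.values())

unique_rows = drop_exact_duplicates(records)
consolidated = consolidate(unique_rows)
```

Exact matching removes only the second record; the third is caught only after the email is normalized, which is why partial duplicates need an explicit matching rule.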

Inconsistent Formatting:

Inconsistent Formatting refers to the presence of data values that do not follow the desired format, such as an incorrect data format, inconsistent use of lowercase and uppercase characters, or inconsistent use of special characters. It can lead to false conclusions, so the data set should be cleaned for high accuracy.

Such inconsistent data makes it challenging to analyze and use the data effectively, and it may lead to errors during data processing. To address inconsistent Formatting, Data Cleaning techniques such as standardization, normalization, and data transformation can be applied to bring the data to a consistent format.

To deal with inconsistent Formatting, the analyst needs to inspect the data and determine the nature and extent of the formatting inconsistencies. Then, he needs to choose the format that he wants to apply to the data to make it consistent; that could be a date format, case format, or a special character format.

If the data set is extensive, he may use Excel macros or Python to automate the formatting process. Further, he needs to check the data after making changes to ensure that the Formatting is consistent and free from errors. In addition, the analyst needs to store the cleaned data in a new file or overwrite the original file to ensure that the cleaned data will be used in future analyses. In any case, the exact approach to inconsistent Formatting depends on the specific situation, the data set, and the desired output.

Cleaning inconsistent Formatting is a critical step in the data preparation process. It brings significant benefits, such as improved data quality and accuracy, easier data analysis, better data visualization, better collaboration, and increased efficiency.
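As an illustration of automating this with Python, the sketch below standardizes the three kinds of format mentioned above: dates, letter case, and special characters. The list of accepted input date formats and the target formats (ISO dates, title-case names, digits-only phone numbers) are assumptions for the example.

```python
import re
from datetime import datetime

# Assumed input formats, tried in order; extend to match the actual data.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%d.%m.%Y")

def standardize_date(text):
    """Return the date as YYYY-MM-DD, or None if no known format matches."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None

def standardize_name(text):
    """Collapse repeated whitespace and apply title case."""
    return re.sub(r"\s+", " ", text).strip().title()

def standardize_phone(text):
    """Strip every character that is not a digit."""
    return re.sub(r"\D", "", text)
```

Returning `None` for an unrecognized date, rather than guessing, leaves those rows visible for the follow-up check described above. Title case is a simplification and will mishandle some names, so the results still need review.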

How to Clean Data?

The data analyst needs to identify the source of data and its quality. Before he starts cleaning the data, he needs to ascertain where it came from and what issues the data may contain. That step may help the data analyst prioritize his efforts and develop a plan to address any problems he finds.

He needs to inspect the data for errors and inconsistencies, such as missing values, outliers, duplicate records, and other issues that could affect the accuracy or usefulness of the data.

There are several options for handling missing data, such as dropping rows or columns with missing values, imputing missing values with the help of statistical techniques, or leaving the missing values as they are. It is the Data Analyst’s responsibility to decide how to handle missing values.

Further, he needs to address errors and inconsistencies by scrutinizing the data. Once the analyst identifies a problem with the data, several techniques are available to fix it. For example, this may involve manually reviewing and correcting errors, using algorithms to identify and fill in missing values, or using data transformation techniques to convert data into a more usable format.

After cleaning the data, it is important to validate it to ensure that it is accurate and consistent. This process may involve comparing the data to external sources, checking for patterns and trends, or performing statistical tests.

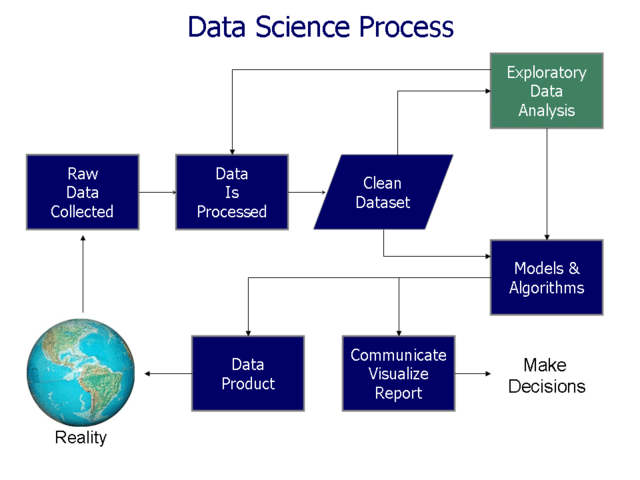

Process:

After cleaning the data, it is important to document the process and the decisions made. This documentation will help the analyst understand how the data was cleaned and will make it easier to replicate or update the process in the future.

Cleaning inconsistent data formatting is a critical step in the data preparation process that significantly benefits data accuracy. However, the specific approach to Data Cleaning usually depends on the size and complexity of the data set, the nature of the problems, and the desired outcome.

Components of Quality Data:

Quality data is data that is accurate, complete, and consistent. It is free from errors and inconsistencies and is suitable for the intended use. Quality Data is essential for making accurate and reliable decisions. Ensuring the quality of your data is an ongoing process that involves identifying and addressing any issues that may arise.

Quality data has several components:

- Accuracy

- Completeness

- Consistency

- Relevance

- Timeliness

- Accessibility

- Validity

- Uniqueness

- Confidentiality

- Integrity

- Privacy

- Representation

- Interoperability

- Usability

- Context

Data Accuracy:

Data accuracy refers to the degree to which the data reflects the true value. It is a measure of how close the data is to the actual, correct value. Accuracy is important because only accurate data can lead to valid conclusions and sound decisions. To ensure data accuracy, it is necessary to validate the data and rectify any errors or inconsistencies that are found. This can be achieved through a combination of manual and automated checks, as well as data cleansing and data normalization techniques.

Data Completeness:

Completeness refers to the extent to which data in a given dataset is complete or lacks any missing values or entries. Data completeness measures the proportion of required data that has been collected and recorded accurately in a database or spreadsheet. It is an important aspect of data quality and can impact the accuracy and reliability of results obtained from data analysis and decision-making processes.
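Completeness as a proportion can be computed directly. The sketch below assumes that `None` and the empty string both count as missing, and that the caller supplies the list of required fields; the sample records are hypothetical.

```python
def completeness(records, required_fields):
    """Share of required cells that actually hold a value (0.0 to 1.0)."""
    total = len(records) * len(required_fields)
    if total == 0:
        return 0.0
    filled = sum(
        1
        for record in records
        for field in required_fields
        if record.get(field) not in (None, "")
    )
    return filled / total

# Hypothetical customer records: 8 required cells, 2 of them empty.
customers = [
    {"name": "Ada", "email": "ada@example.com"},
    {"name": "Alan", "email": ""},
    {"name": None, "email": "grace@example.com"},
    {"name": "Edsger", "email": "edsger@example.com"},
]

score = completeness(customers, ["name", "email"])
```

For these four records the score is 0.75, meaning a quarter of the required cells still need attention.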

Data Consistency:

Data consistency is the degree to which the values stored in a database or dataset conform to the defined rules and constraints of the database schema. More broadly, it refers to the degree to which data values are uniform and conform to standards, rules, or procedures. Ensuring data consistency helps to maintain the integrity and reliability of data and reduces errors and inconsistencies that can occur during data processing and analysis.

Data Relevance:

Data relevance refers to the degree to which a specific data element is valuable and applicable to a particular situation or decision-making process. It refers to the extent to which data is directly related to and supports the objectives and goals of the analysis or decision at hand. Relevant data is critical in making informed and effective decisions and helps ensure that the right information is being used to support the desired outcome. Irrelevant data can affect the decision-making process and lead to incorrect conclusions.

Timeliness:

Data timeliness refers to the degree to which data is available and updated in a timely manner to support decision-making processes. It refers to the age of the data and how up-to-date and current it is in relation to the decision or analysis at hand. Timeliness is vital in many industries and applications where real-time information is essential for making informed and accurate decisions. Data that is not timely can be outdated and less valuable, leading to incorrect conclusions and decisions. Timeliness requires effective data management processes and systems that can quickly and accurately update and provide access to the most current information.
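One simple way to check timeliness is to compare each record's last update against a maximum acceptable age. The sketch below assumes every record carries an `updated` date and that the acceptable age is chosen by the analyst; the field name, the 30-day limit, and the sample data are hypothetical.

```python
from datetime import date, timedelta

def stale_records(records, as_of, max_age_days):
    """Return the records last updated more than `max_age_days` before `as_of`."""
    cutoff = as_of - timedelta(days=max_age_days)
    return [r for r in records if r["updated"] < cutoff]

# Hypothetical price records.
prices = [
    {"id": 1, "updated": date(2023, 12, 15)},
    {"id": 2, "updated": date(2024, 1, 10)},
    {"id": 3, "updated": date(2024, 1, 1)},
]

# A fixed reference date keeps the example reproducible.
outdated = stale_records(prices, as_of=date(2024, 1, 31), max_age_days=30)
```

Only record 1 is reported as outdated; record 3 falls exactly on the cutoff date and is still treated as current.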

Data Accessibility:

Data accessibility refers to the ease with which data can be retrieved and used for decision-making purposes. It is the ability of individuals and systems to locate and retrieve data in an efficient manner. Accessibility is a critical aspect of data management and can impact the ability to make informed decisions based on accurate and relevant data. Data that is not accessible can cause delays and inefficiencies and lead to incorrect conclusions and decisions. Accessibility requires effective data management processes and systems, along with secure access controls to protect sensitive information.

Data Validity:

Data validation is the process of checking the integrity, accuracy, and quality of data before it is used for commercial purposes. Data validity refers to the correctness of data, that is, how well it adheres to the defined standards, rules, and constraints of the data system or database. It is one of the most crucial measures of data quality: the degree to which data meets specified requirements and accurately represents the problem it is intended to represent.

It is a critical step in the analytics world to ensure a smooth data workflow. Data validity is an essential aspect of data quality and can impact the accuracy and reliability of results obtained from data analysis and decision-making processes. Further, it requires effective data management processes, data validation procedures, and error-checking mechanisms to detect and correct inaccuracies.
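A validation procedure of this kind can be expressed as a set of named rules, one per field. The rules below (an age range, a loose email pattern, and a fixed list of country codes) are hypothetical examples, not a recommended standard.

```python
import re

# Deliberately loose pattern: something@something.something
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

# Hypothetical validity rules, one check per field.
RULES = {
    "age": lambda v: isinstance(v, int) and 0 <= v <= 120,
    "email": lambda v: isinstance(v, str) and EMAIL_PATTERN.match(v) is not None,
    "country": lambda v: v in {"US", "IN", "DE"},
}

def validate(record, rules=RULES):
    """Return the names of the fields that break their rule."""
    return [field for field, check in rules.items() if not check(record.get(field))]

good = validate({"age": 30, "email": "ada@example.com", "country": "US"})
bad = validate({"age": 150, "email": "not-an-email", "country": "FR"})
```

Returning the list of failing fields, instead of a single pass or fail flag, tells the analyst which correction each record needs.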

Data Uniqueness:

Data Uniqueness is the degree to which each data element in a data set is distinct and does not repeat. It is a critical dimension for avoiding duplication and overlaps. Uniqueness can be measured across all records within a data set or across data sets. It is the property of data where each value in the data set is distinct and can be identified as a single and separate entity. In databases, a duplicate record can lead to confusion, errors, and incorrect conclusions; therefore, data uniqueness is crucial for many applications. Uniqueness requires effective data management processes and techniques, such as unique identifier keys, to prevent duplicate records and ensure consistent data.
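Uniqueness within a data set can be measured as the share of distinct key values. The sketch below assumes a single identifier field named `id`; the field name and the sample orders are hypothetical.

```python
from collections import Counter

def uniqueness(records, key):
    """Share of records whose key value is distinct (1.0 means no repeats)."""
    keys = [r[key] for r in records]
    if not keys:
        return 1.0
    return len(set(keys)) / len(keys)

def duplicated_keys(records, key):
    """Key values that occur more than once, in sorted order."""
    counts = Counter(r[key] for r in records)
    return sorted(k for k, n in counts.items() if n > 1)

# Hypothetical order records with one repeated identifier.
orders = [{"id": 101}, {"id": 102}, {"id": 102}, {"id": 103}]

ratio = uniqueness(orders, "id")
repeats = duplicated_keys(orders, "id")
```

Here the ratio is 0.75 and the repeated identifier is 102, which points directly at the records to review.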

Data Confidentiality:

Data confidentiality refers to protecting sensitive data from unauthorized access and disclosure, including protecting personal privacy and proprietary information. It also refers to the steps taken to ensure that data is not disclosed to unauthorized parties and is kept secure and protected.

Any lapse in data confidentiality can lead to a data breach. Data confidentiality is vital in many industries and applications, such as healthcare, finance, and government, where sensitive information must be protected to maintain privacy and security. Confidentiality requires effective security measures, such as encryption, access controls, and data protection policies, to prevent unauthorized access and ensure the safe and secure storage and handling of sensitive information.

Data Integrity:

Integrity is the consistency and accuracy of data stored in a database, computer system, or other storage media over its entire life cycle. It means maintaining and assuring the reliability and trustworthiness of data so that it can be used and processed without error and that it remains secure from unauthorized access or manipulation. This is accomplished through various methods such as data validation, error detection and correction, and data backup and recovery procedures.

Data Privacy:

Data privacy protects personal information and sensitive data from unauthorized access, use, disclosure, alteration, or destruction. It means that individuals can determine who can access their personal data and can control how their personal information is collected, stored, used, and shared. It is closely related to data security.

Its objective is to ensure that individuals have control over their personal information and know that it is treated with respect and confidentiality. Data privacy is not the same as data security: privacy concerns who may collect and use the data, while security concerns how the data is protected. Governments across the globe have passed laws to regulate what kind of data can be collected about users. Data privacy is sometimes also referred to as information privacy. The General Data Protection Regulation (GDPR) in the European Union and the California Consumer Privacy Act (CCPA) in the United States are well-known examples of such laws.

Data Representation:

Data representation is the method by which data is stored, organized, and represented in a computer system. It refers to the various formats and structures used to encode information so that it can be processed, transmitted, and stored by computer systems. Data representation can take many forms that include text, numbers, images, audio, and video.

In addition, it can be stored in various formats, such as ASCII text files, binary files, databases, and more. The choice of data representation depends on the type of data being stored and the purpose for which it will be used, and the requirements for processing and storage. Effective data representation is important for data processing and analysis accuracy, efficiency, and security.

Data Interoperability:

Data interoperability is the ability of different systems and services, applications, and data formats to work together and exchange data in a meaningful and effective manner. It allows data flow between different systems and platforms, authorizing the sharing of information and collaboration between organizations and supporting the integration of various technologies and services.

Interoperability is achieved by using common data formats, protocols, and APIs and implementing data standards and best practices for data exchange and integration. It is critical to support innovation and efficiency in the healthcare, finance, and technology industries. In addition, it empowers organizations to leverage the benefits of new technologies and data-driven approaches effectively.

Data Usability:

Data usability is the ability of users to derive useful information from data with ease, so that the data can be understood, used, and applied in practice. Usability is crucial for data-driven organizations. It is concerned with the design and presentation of data, and it is essential for making data accessible and valuable to a wide range of users, including domain experts, analysts, and decision-makers. A data system is usable when it provides users with relevant and accurate information, is easy to navigate and understand, and supports effective data exploration and analysis.

Data usability aims to help users to work with data effectively and efficiently and to support the use of data to make informed decisions and solve problems. Factors that contribute to data usability include data quality, organization, visualization, and the availability of tools and resources for data analysis and interpretation.

Data Context:

Data context is the information and knowledge that accompanies the data, such as its background, purpose, and relevance. It gives the data meaning, so that users can understand the data and how it can be used. Data Context includes information about the origin of the data, its creation date, its format, and its accuracy.

Data context also provides information about the intended use of the data and the circumstances in which it was created. Understanding the data context is crucial for making informed decisions and using the data successfully, because it helps ensure the data is used correctly and not misinterpreted. It can be documented in metadata, data dictionaries, and other forms of documentation. It can also be conveyed through data visualization, such as graphs and charts, that provide additional context and insights into the data.

Benefits of Data Cleaning:

Data cleaning improves accuracy by identifying and correcting the inconsistencies and errors in the data. Once the data is cleaned and free from errors and inconsistencies, it is more reliable, trustworthy, and credible. Cleaning streamlines the data analysis process and reduces the time needed to prepare the data for analysis. It improves data quality, which in turn enhances the quality of analysis and decision-making.

Data Cleaning Tools:

Data cleaning tools are used to remove or correct inaccuracies, inconsistencies, and irrelevant information in data sets. They make the data more reliable, consistent, and useful for analysis and decision-making. In addition, these tools standardize and transform data into a common format, fill in missing values, remove duplicates, and fix errors. Clean data is crucial for obtaining accurate results and insights from data analysis, which is why so many tools and techniques are used in data cleaning.

Data Visualization Tools:

The data analyst uses data visualization tools to visualize and explore the data set to identify errors and inconsistencies. Data visualization tools are application software that can represent data in a graphical or pictorial format. These tools allow users to convert complex and large data sets into interactive visual representations such as charts, graphs, maps, and dashboards.

The objective of data visualization is to make data easier to understand and communicate insights. It supports data-driven decision-making. Data visualization tools can help identify trends, patterns, and relationships in data that would be difficult to detect in raw data form. Some popular data visualization tools are Tableau, PowerBI, QlikView, and Matplotlib.

Most Popular Data Visualization Tools

- Tableau

- PowerBI

- QlikView

- Matplotlib

- D3.js

- ggplot2

- Highcharts

- Plotly

- Microsoft Excel

- Google Charts

Data Cleansing Software:

Data cleansing software is also known as data scrubbing software or data cleaning software. It identifies and corrects errors, inaccuracies, inconsistencies, and irrelevant information in data sets. It improves data quality by removing duplicates, fixing errors, standardizing formats, and filling in missing values.

It uses algorithms to identify and correct errors and inconsistencies in data automatically, and it provides options for handling missing values and duplicates.

Popular Data Cleansing Software:

- Talend Data Quality

- Informatica Data Quality

- Microsoft SQL Server Integration Services (SSIS)

- Google Cloud Data Loss Prevention

- DataRobot

- Trifacta

- Alteryx Data Cleansing

- Talend MDM

- Dataddo

- DataWalk

Spreadsheets:

Spreadsheets are computer applications that help the user organize, manipulate, and analyze data in a grid format. They are used in record keeping, analysis, and financial budgeting. In spreadsheets, the data is organized in rows and columns. Formulas, functions, and pivot tables make it easy to manipulate the data, and the user can represent the data graphically as charts and graphs. Microsoft Excel is the most commonly used spreadsheet software, and many alternatives are available, such as Google Sheets, Apple Numbers, and LibreOffice Calc. Spreadsheets are widely used because they are easy to use, flexible, and help users perform complex calculations and analyses easily.

Popular Spreadsheets:

- Microsoft Excel

- Google Sheets

- Apple Numbers

- LibreOffice Calc

- WPS Office Spreadsheets

- Zoho Sheet

- Smartsheet

- Airtable

- Quip Spreadsheets

- EtherCalc

Data Transformation Tools:

Data transformation is a crucial step in the data integration process. Data transformation tools are applications that automate this process, often completing it within minutes. They convert data from one format to another, integrate data from multiple sources, and make it usable for analysis and reporting. Besides, data transformation tools can do data mapping, data normalization, data aggregation, and data enrichment.

Popular Data Transformation Tools:

- Talend Data Integration

- Microsoft SQL Server Integration Services (SSIS)

- Apache NiFi

- Pentaho Data Integration

- Talend Open Studio

- Hevo

- StreamSets Data Collector

- Google Cloud Dataflow

- AWS Glue

- Alteryx Data Transformation Tool

Data Cleaning Scripts:

Cleaning Scripts are computer programs written in a specific programming language to automate the process of data cleaning. They are used to correct inaccuracies, inconsistencies, and irrelevant information in data sets. These scripts can be written in various programming languages, such as Python, R, and SQL. They are used to remove duplicates, fill in missing values, correct data errors, standardize data formats, replace incorrect values, and remove irrelevant data.

They also help to merge data from multiple sources and to aggregate, normalize, and enrich data. Data cleaning scripts are useful when dealing with large and complex data sets, as they allow automation and repetition of the cleaning process. In addition, they can be modified to accommodate changes in the data or in the cleaning requirements.

Scripts Employed in Data Cleaning:

Python: The pandas library in Python is a popular tool for Data Cleaning. It provides functions to handle missing values, remove duplicates, and transform data into a desired format.

R: The tidyr and dplyr packages in R are commonly used for data cleaning, offering functions for reshaping data, removing duplicates, and handling missing values.

SQL: Analysts can use SQL to clean data stored in databases. Common SQL operations in the data-cleaning process include removing duplicates, updating incorrect values, and aggregating data.

The choice of Data cleaning scripts depends on the specific data and requirements, so it is essential to understand the specific cleaning needs and select the scripts accordingly.
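The SQL operations mentioned above can be tried out from Python with the built-in `sqlite3` module and an in-memory database. The `customers` table, its columns, and the rule of keeping the lowest `id` for each email are assumptions made for this sketch; other database systems may need slightly different syntax.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT, city TEXT)")
conn.executemany(
    "INSERT INTO customers (email, city) VALUES (?, ?)",
    [
        ("ada@example.com", "london"),
        ("ada@example.com", "london"),        # duplicate row
        ("alan@example.com", " Manchester "),  # stray spaces and mixed case
        ("grace@example.com", None),           # missing city
    ],
)

# Remove duplicates: keep the row with the lowest id for each email.
conn.execute(
    "DELETE FROM customers "
    "WHERE id NOT IN (SELECT MIN(id) FROM customers GROUP BY email)"
)

# Update incorrect values: normalize the city text and label missing cities.
conn.execute("UPDATE customers SET city = LOWER(TRIM(city)) WHERE city IS NOT NULL")
conn.execute("UPDATE customers SET city = 'unknown' WHERE city IS NULL")

# Aggregate: count the remaining customers per city.
city_counts = conn.execute(
    "SELECT city, COUNT(*) FROM customers GROUP BY city ORDER BY city"
).fetchall()
remaining = conn.execute("SELECT COUNT(*) FROM customers").fetchone()[0]
conn.close()
```

Running the deletion before the aggregation matters: counting first would report two customers in London instead of one.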

The Characteristics of Data Cleaning:

Data Cleaning is an iterative, ongoing process that identifies and addresses issues as and when they arise. The analyst may need to repeat certain steps or go back and review the data multiple times. Data cleaning requires careful reviewing and correcting of data errors and inconsistencies, and it demands attention to detail.

Data cleaning needs decision-making skills to handle missing values, outliers, and other issues. These issues affect the accuracy and reliability of the data, so it is vital to consider the available options for effective cleaning. In addition, data cleaning is time-consuming for large or complex datasets. Therefore, it is essential to allow enough time and resources to ensure your data is cleaned effectively.

Some Data cleaning tasks can be automated with application software or scripts to save time and effort. However, it is important to review the results of automated cleaning to confirm that the data is accurate and reliable. Therefore, Data Cleaning is an essential step in the data analysis process that requires careful review, analysis, and decision-making to produce accurate and reliable data.

Steps of Data Cleaning:

- Inspection

- Handling Missing Values

- Removing Duplicates

- Handling Outliers

- Data Transformations

- Data Normalization

- Data Validation

- Data Integration

First, the analyst needs to understand where the data came from and what issues it contains. This identification process can help him prioritize his efforts and develop a plan for addressing the problems. Next, he needs to inspect the data for errors and inconsistencies, such as missing values, duplicate records, outliers, and other unknown issues that could hamper the results. Then, the analyst needs to decide how to handle the missing values.

There are several options for dealing with missing data, including dropping a row or column with missing values, imputing the values using statistical techniques, or leaving the missing values as they are. Finally, he needs to address the errors and validate the data. Once he has cleaned the data, he needs to document all his processes and decisions. This will help him understand how he cleaned and processed the data, which will make it easier for him to replicate or update the process in the future.

Use of Data Cleaning in Data Mining

Data Cleaning plays a crucial role in Data Mining. It helps to prepare the data for analysis and modeling. Data quality is a critical factor in Data Mining. The results obtained from Data Mining algorithms rely purely on the quality of the input data. Poor quality data can result in inaccurate, misleading, or incomplete results, which can have severe consequences for decision-making.

That is why Data Cleaning is an essential step in the Data Mining process. One of the objectives of Data Cleaning is to improve the accuracy of the data. The errors such as typos, incorrect values, or missing data can lead to inaccuracies in the results obtained from Data Mining algorithms. Data Cleaning helps to correct these errors for more accurate results from Data Mining.

The other important objective of Data Cleaning is to reduce noise in the data. Data mining algorithms are designed to find patterns in data, but irrelevant or redundant data can interfere with the process. Data Cleaning helps remove unwanted data. It reduces the noise in the data and improves the performance of Data Mining algorithms, which produce better results when they can focus on the critical patterns in the data.

Data Mining Vs. Data Cleaning:

Furthermore, data cleaning enhances the quality of data. It ensures the data is consistent and formatted uniformly, which makes it easier to mine and analyze. In addition, Data Cleaning identifies and handles missing values, which is critical in some Data Mining applications. Higher-quality input data leads to better results from Data Mining algorithms.

Finally, Data Cleaning increases data consistency, which is essential for Data Mining. Data mining algorithms often rely on data from multiple sources, and cleaning ensures the data is consistent across these sources. Consistent data gives more accurate and reliable results from Data Mining. In short, Data Cleaning is an essential step in the Data Mining process: it prepares the data for analysis and modeling and helps to get more accurate results from Data Mining algorithms.

Data Cleaning vs. Data Scrubbing:

The terms Data Cleaning and data scrubbing are often used interchangeably to refer to the process of identifying and correcting errors and inconsistencies in data. However, data scrubbing is a more rigorous cleaning process that involves a more detailed review and analysis of the data.

Cleaning identifies and corrects or removes errors, inconsistencies, and duplicates in a dataset. The goal of data cleaning is to improve the quality of the data and make it more usable for analysis and decision-making.

On the other hand, data scrubbing is a more comprehensive process that goes beyond just cleaning. It transforms raw data into a structured format that is consistent, accurate, and usable. It can clean and correct errors, but it also includes activities such as standardizing data values and converting data into a consistent format. Besides, it transforms data into a more suitable structure for analysis. Data Cleaning can do a basic data review to identify and fix obvious errors and inconsistencies. On the other hand, data scrubbing does a more detailed review and analysis of the data, which includes checking for patterns and trends and identifying and correcting more subtle errors and inconsistencies.

Data Scrubbing Vs. Data Cleaning:

Data cleaning focuses on correcting inaccuracies and inconsistencies; data scrubbing encompasses a wider range of activities aimed at improving the overall quality and structure of the data. Both processes are important for ensuring the accuracy and reliability of data for use in decision-making and analysis.

Data Cleaning may involve a combination of automated and manual techniques, such as using algorithms to identify and fill in missing values or manually reviewing and correcting data errors. Data scrubbing may additionally involve more advanced tools and techniques, such as data cleansing software or custom-written scripts.

Data Scrubbing and Data cleaning are related but distinct processes that are used to improve the quality and reliability of data.

Data Cleansing Vs. Data Cleaning

Data Cleansing and Data Cleaning are often used interchangeably. Both refer to the process of identifying and correcting errors and inconsistencies in data. However, data cleansing may refer to a more comprehensive process that involves a thorough review and analysis of the data and includes additional steps such as standardizing data formats and integrating data from multiple sources.

Data cleansing is sometimes also called Data Purging or Data Hygiene. It refers to the process of removing or correcting any inaccurate, irrelevant, outdated, or duplicated data in a dataset. The objective of data cleansing is to improve the overall quality and integrity of the data by reducing errors and inconsistencies. It can be manual or automated, and it often involves a combination of both. Although data cleansing may be time-consuming, it is essential to ensure that the data being used is accurate, relevant, and trustworthy.

Data Cleaning vs. Data Cleansing:

Viewed the other way around, data cleaning can be treated as a broader term that encompasses data cleansing and other data preparation activities. Unlike data cleansing, which focuses on eliminating inaccurate data, data cleaning focuses on improving the overall quality and usability of the data. Data Cleaning is an essential step in the data science process, which uses statistical and computational techniques to analyze large quantities of data, extract insights, and inform decision-making. Cleaning ensures that the data used for that analysis is accurate and reliable.

Data cleansing is a specific process that removes or corrects inaccurate data, whereas data cleaning is a broader process that encompasses data cleansing as well as other data preparation activities aimed at improving the quality and usability of the data. Both processes are important for ensuring that the data being used is accurate, trustworthy, and fit for analysis and decision-making.

Data Cleaning vs. Data Transformation

As discussed earlier, Data Cleaning is the process of identifying and correcting errors, inconsistencies, and duplicates in a dataset. The goal of data cleaning is to improve the quality and reliability of the data.

Data Transformation, on the other hand, converts data from one format or structure to another to meet specific requirements or facilitate data analysis. It can include data cleaning and other activities such as standardizing data values, converting data into a consistent format, and transforming data into a suitable structure for analysis. Data transformation is an essential step in the data preparation process because it enables the data to be used effectively for analysis and decision-making.

In summary, data cleaning improves the quality of the data, while data transformation converts the data into a suitable format for analysis and decision-making. Both processes are important for ensuring the quality and usability of the data. Data cleaning is typically a prerequisite for data transformation, and the two often go hand in hand as part of the data preparation process.
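
A minimal sketch of cleaning followed by transformation is shown below. The sales rows and the `clean` and `transform` helpers are hypothetical. The sketch also assumes that two rows with the same region, month, and amount are accidental duplicates, which may not hold for real sales data.

```python
from collections import defaultdict

# Hypothetical raw sales rows in "long" format, one row per sale.
raw_sales = [
    {"region": "North", "month": "2024-01", "amount": "100.0"},
    {"region": "North", "month": "2024-01", "amount": "100.0"},  # duplicate row
    {"region": "South", "month": "2024-01", "amount": "n/a"},    # invalid amount
    {"region": "South", "month": "2024-02", "amount": "80.5"},
    {"region": "North", "month": "2024-02", "amount": "120"},
]


def clean(rows):
    """Cleaning step: drop rows with unparseable amounts and exact duplicates."""
    seen = set()
    cleaned = []
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # the amount is not a number, so the row is discarded
        key = (row["region"], row["month"], amount)
        if key in seen:
            continue
        seen.add(key)
        cleaned.append({"region": row["region"], "month": row["month"], "amount": amount})
    return cleaned


def transform(rows):
    """Transformation step: reshape long rows into region -> {month: total}."""
    table = defaultdict(dict)
    for row in rows:
        months = table[row["region"]]
        months[row["month"]] = months.get(row["month"], 0.0) + row["amount"]
    return dict(table)


wide = transform(clean(raw_sales))
print(wide)
```

Running `transform` on the uncleaned rows would fail on the "n/a" amount and would double-count the duplicated sale, which is why cleaning comes first here.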

How to make Data-Driven Decisions with Data Cleaning

Data-driven decisions are based on available data and its analysis rather than on intuition or experience. Making such decisions requires high-quality data that is accurate, consistent, and usable. Data cleaning helps the analyst obtain data of that quality.

Cleaning improves the quality and reliability of an organization's data and is essential for accurate data analysis. The cleaned data can then be used for analysis and decision-making: analysis reveals patterns and relationships in the data set, and these provide the insights that inform decisions.

Data-driven decisions rely entirely on high-quality data that is accurate, consistent, and usable. Data cleaning is an essential step in the data preparation process. It helps organizations improve the quality of their data and make it usable for analysis and decision-making. By combining data cleaning with data analysis, organizations can make data-driven decisions that are based on facts rather than intuition or experience.
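
To make this concrete, the sketch below uses hypothetical order records in which one order was recorded three times. It compares the marketing channel that looks best before and after duplicates are removed. The data, the field names, and the "highest average revenue" decision rule are all assumptions for illustration.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical orders; order 5 was accidentally recorded three times.
orders = [
    {"order_id": 1, "channel": "email", "revenue": 40.0},
    {"order_id": 2, "channel": "email", "revenue": 50.0},
    {"order_id": 3, "channel": "email", "revenue": 60.0},
    {"order_id": 4, "channel": "ads", "revenue": 20.0},
    {"order_id": 5, "channel": "ads", "revenue": 70.0},
    {"order_id": 5, "channel": "ads", "revenue": 70.0},
    {"order_id": 5, "channel": "ads", "revenue": 70.0},
]


def drop_duplicate_orders(rows):
    """Keep only the first record seen for each order_id."""
    seen = set()
    unique = []
    for row in rows:
        if row["order_id"] not in seen:
            seen.add(row["order_id"])
            unique.append(row)
    return unique


def mean_revenue_by_channel(rows):
    """Average revenue per order for each channel."""
    by_channel = defaultdict(list)
    for row in rows:
        by_channel[row["channel"]].append(row["revenue"])
    return {channel: mean(values) for channel, values in by_channel.items()}


def best_channel(rows):
    """Assumed decision rule: pick the channel with the highest average revenue."""
    averages = mean_revenue_by_channel(rows)
    return max(averages, key=averages.get)


raw_choice = best_channel(orders)
clean_choice = best_channel(drop_duplicate_orders(orders))
print("Decision on raw data:    ", raw_choice)
print("Decision on cleaned data:", clean_choice)
```

With these numbers the duplicated order inflates the "ads" average, so the raw data and the cleaned data point to different channels. The decision rule is the same in both cases; only the quality of the input changes.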
