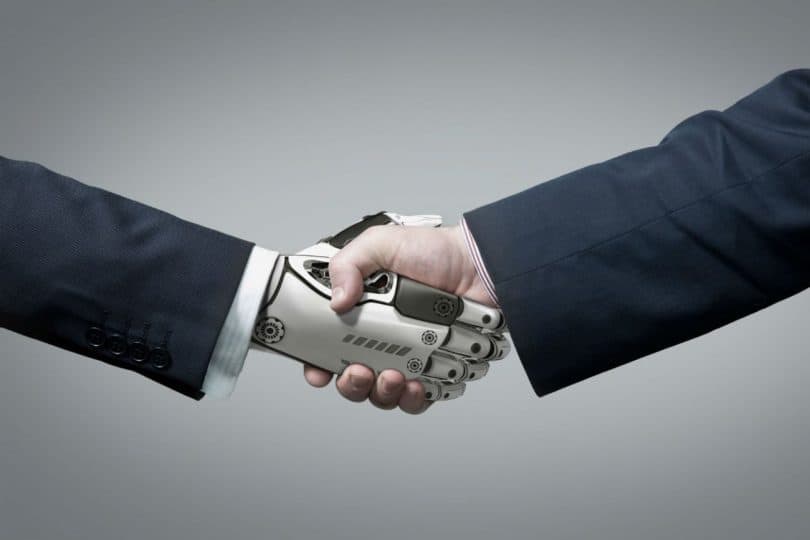

Artificial intelligence (AI) in general, and cognitive computing (CC) in particular, are set to drive another revolution in the tools that serve fields as diverse as science, medicine, city management and manufacturing, or that give machines autonomy. In short, they will affect everyone’s life.

Consider, for example, RobERt (Robotic Exoplanet Recognition), which analyzes planets that might harbor life; the prediction of conditions such as heart attacks or strokes; so-called “smart cities”; or autonomous means of transport, such as cars and trains.

Although Artificial Intelligence and CC may seem synonymous, they are two related but slightly different concepts. The Harvard Business Review (HBR), in its article “Artificial Intelligence adds a new layer to cyber risk”, published last April, provided the following definitions:

“While the two technologies refer to the same process, […] CC uses a set of technologies designed to increase the cognitive capacities of the human mind. A cognitive system can perceive and infer, reason and learn. In a broad sense, [AI] refers to computers that can perform tasks for which human intelligence would be required.”

That is, CC would be a discipline contained within Artificial Intelligence.

AI systems, in general, can be trained to analyze and understand natural language, imitate human reasoning or make decisions.

CC systems are built to provide information for decision-making and to learn by themselves, both from the data they collect or are given and from their interaction with people.

Getting a system or machine to learn a task or assimilate a body of knowledge takes three steps. First, it must be given a set of algorithms, consisting of statistical mathematical models. Second, those algorithms process the data or information fed into the system, which starts the learning process. Third, experts hold training sessions in which they ask questions and evaluate the machine’s responses, and the system changes its results according to the evaluation it receives.

The algorithms are adapted depending on the data supplied and the interaction with humans or the environment.
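The loop described above (a statistical model, data fed into it, and expert evaluation that changes the results) can be sketched in a few lines of Python. This is only an illustrative toy, not how any particular cognitive system is built: the perceptron model, the `OnlineClassifier` name, the two input features and the labels are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class OnlineClassifier:
    """Toy perceptron whose weights change only when an expert corrects it."""
    n_features: int
    learning_rate: float = 0.5
    weights: list = field(default_factory=list)
    bias: float = 0.0

    def __post_init__(self):
        if not self.weights:
            self.weights = [0.0] * self.n_features

    def predict(self, x):
        # Step 1: the statistical model (here, a linear score with a threshold).
        score = sum(w * xi for w, xi in zip(self.weights, x)) + self.bias
        return 1 if score > 0 else 0

    def feedback(self, x, expert_label):
        # Step 3: the expert evaluates the answer; a wrong answer shifts the weights.
        error = expert_label - self.predict(x)
        for i, xi in enumerate(x):
            self.weights[i] += self.learning_rate * error * xi
        self.bias += self.learning_rate * error


def train(model, examples, epochs=20):
    # Step 2: feed the data to the model, repeatedly.
    for _ in range(epochs):
        for x, label in examples:
            model.feedback(x, label)
    return model


# Hypothetical data: (many_failed_logins, outside_office_hours) -> 1 = suspicious
examples = [((0.0, 0.0), 0), ((1.0, 0.0), 0), ((0.0, 1.0), 0), ((1.0, 1.0), 1)]
model = train(OnlineClassifier(n_features=2), examples)
predictions = [model.predict(x) for x, _ in examples]
```

After training, `predictions` matches the expert labels for these four cases. Because `feedback` keeps adjusting the weights for as long as it is called, the same mechanism that teaches the model can also mislead it if the feedback is wrong.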

CC in particular, and therefore the Artificial Intelligence that encompasses it, like any information technology, introduces new elements of insecurity on the one hand and, on the other, can be used to make systems more secure. A very powerful technology can do as much harm as good, so the control exercised over it must be proportionate to its power.

New risks involved in CC

In the article cited above, the HBR addresses this issue by focusing on what is intrinsic to CC: its algorithms adjust as the system processes new data and interacts with the environment and with humans, both while the system is being set up and built and later, once it is in operation.

Continuous learning as the system interacts, which at first seems appropriate because that is what it was built for, has its risks. What the system goes on learning, and the results it produces, must therefore be monitored very closely.

In the first stage of an Artificial Intelligence system, that of its initial setup and learning, the people training it may, unconsciously or intentionally, supply confusing or erroneous data, omit information that is critical to achieving the desired behavior, or train the system inappropriately.

Once in operation, the system is by definition continually changing in its day-to-day activity, and anyone who wanted to manipulate it would only have to interact with it in a way that changes its objectives or adds new ones.

An example of this was the Twitter bot “Tay”, designed to learn to communicate in natural language with young people, which had to be withdrawn quickly. Malicious users (trolls) exploited the vulnerabilities of its learning algorithms and fed it racist and sexist content, with the result that “Tay” began responding with inappropriate comments to millions of followers.
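To make this kind of manipulation concrete, here is a deliberately simplified Python sketch. It is not a description of how “Tay” worked internally; the `ResponseLearner` class, its approval-counting rule and the phrases are all hypothetical. It only shows how a system that keeps learning from interaction can be pushed off course by coordinated input, and how a simple periodic audit can notice it.

```python
class ResponseLearner:
    """Hypothetical bot that learns which phrases are acceptable from user reactions."""

    def __init__(self):
        self.approvals = {}  # phrase -> [approving reactions, total reactions]

    def learn(self, phrase, approved):
        counts = self.approvals.setdefault(phrase, [0, 0])
        counts[0] += int(approved)
        counts[1] += 1

    def will_say(self, phrase, threshold=0.5):
        approve, total = self.approvals.get(phrase, (0, 0))
        return total > 0 and approve / total > threshold


def audit(learner, blocked_phrases):
    """Monitoring check: list blocked phrases the bot has learned to use."""
    return [p for p in blocked_phrases if learner.will_say(p)]


bot = ResponseLearner()
for _ in range(10):
    bot.learn("hello there", True)
    bot.learn("offensive slogan", False)  # ordinary users reject it
clean_audit = audit(bot, ["offensive slogan"])

for _ in range(50):
    bot.learn("offensive slogan", True)   # coordinated trolls approve it
poisoned_audit = audit(bot, ["offensive slogan"])
```

In this toy, `clean_audit` is empty while `poisoned_audit` flags the phrase: nothing in the learning rule was "hacked", the attackers simply supplied more feedback than the legitimate users did.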

Just as CC can be put to proper use, it can also be used for the opposite.

Chatbots are systems that interact with people in natural language and can be used in automated customer service centers. Accuracy in their responses is very important, especially in sectors such as health and finance, which also handle large volumes of confidential data collected and processed for that purpose.

Such chatbots may also be used by cybercriminals to scale up their fraudulent transactions, deceive people by impersonating trusted individuals or institutions, steal personal data, and break into systems.

It must therefore be possible to detect changes in the normal activity of networks and computers, and to detect new threats.

CC as a cybersecurity tool

To face a threat, there is no choice but to use tools at least as powerful as the threat itself.

Something does seem to be happening in this direction, judging by the results of a study presented by the HBR. It concludes that Artificial Intelligence is used most in computer-to-computer activities and in the analysis of information produced or exchanged between machines. The IT department is the heaviest user, and 44% of that use goes to detecting unauthorized intrusions into systems, that is, to cybersecurity.

Artificial Intelligence and machine learning techniques can provide better insight into the normal operation of systems and into the actions and patterns of unauthorized or malicious code.

Thus, applied to routine tasks such as analyzing large volumes of data on system activity or security incidents, these techniques identify abnormal behavior with great precision and detect unauthorized access or malicious code.

In addition, cybercriminals constantly transform their attacks to achieve their goals. Much of the malware circulating on the network is related to other, already known malware. Cognitive systems, by scanning thousands of malicious code objects, can find patterns of behavior that help identify future mutated viruses, and even understand how hackers exploit new approaches.
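One simple way to picture "related to known malware" is to measure how much raw content a new sample shares with known families. The Python sketch below uses Jaccard similarity over byte n-grams. This is a stand-in chosen for illustration, not the technique any specific cognitive system is known to use, and the family names and byte strings are invented placeholders rather than real samples.

```python
def byte_ngrams(data: bytes, n: int = 4) -> set:
    """All overlapping n-byte slices of the sample."""
    return {data[i:i + n] for i in range(len(data) - n + 1)}


def jaccard(a: set, b: set) -> float:
    """Shared n-grams divided by all n-grams seen in either sample."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def closest_known_family(sample: bytes, known: dict, n: int = 4):
    """Return (family_name, similarity) for the most similar known sample."""
    grams = byte_ngrams(sample, n)
    scores = {name: jaccard(grams, byte_ngrams(body, n)) for name, body in known.items()}
    best = max(scores, key=scores.get)
    return best, scores[best]


# Hypothetical stand-ins for the byte content of known samples.
known = {
    "family_a": b"connect;encrypt_files;demand_ransom;spread_smb",
    "family_b": b"log_keys;upload_keys;hide_process",
}
variant = b"connect;encrypt_files;demand_ransom;spread_rdp"
family, score = closest_known_family(variant, known)
```

Here the mutated `variant` is matched to `family_a` because only its final bytes changed. Real systems work on far richer features (behavior, structure, call sequences), but the principle of scoring new code against what is already known is the same.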

This is achieved through knowledge tailored to the organization, since the cognitive system learns from the information supplied by all the elements that make up its computer systems, in the broad sense of both corporate computing (IT) and operational computing, which provide not only data but also the technologies employed, the systems architecture, and so on.

An amount of traffic on a network segment, or a number of requests to a server, that poses a threat for one company may be perfectly normal for another. It depends on the business and on how technology is used.
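A minimal Python sketch of this idea: each organization builds its own baseline from its own history, and the same reading is judged against that baseline. The z-score rule, the threshold of 3 and the request counts are assumptions chosen for illustration; a real cognitive system would learn far richer profiles.

```python
from statistics import mean, stdev


def build_baseline(history):
    """Summarize an organization's normal activity as (mean, standard deviation)."""
    return mean(history), stdev(history)


def is_anomalous(value, baseline, z_threshold=3.0):
    """Flag a reading that lies more than z_threshold deviations from normal."""
    mu, sigma = baseline
    if sigma == 0:
        return value != mu
    return abs(value - mu) / sigma > z_threshold


# Hypothetical requests per minute seen by two different organizations.
web_shop = build_baseline([900, 1100, 1000, 950, 1050])
small_office = build_baseline([40, 60, 50, 45, 55])

reading = 1000
shop_alarm = is_anomalous(reading, web_shop)
office_alarm = is_anomalous(reading, small_office)
```

The same reading of 1000 requests per minute raises no alarm for the web shop but is flagged for the small office, which is exactly why the knowledge has to be adjusted to each organization.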

With this knowledge, alarms are considerably reduced, allowing analysis and human action to focus on the incidents that require it; threats can be stopped before they penetrate and propagate through systems; and, in some cases, a patch can be applied automatically where it is needed.

But cognitive systems need not be limited to analyzing the data produced by machines and stored in logs, that is, structured data. CC has other tools that systems can use to interpret unstructured data. By using both spoken and written natural language recognition, they can examine reports, alerts communicated on social networks, or comments on threats, conversations or seminars, just as human security experts do to keep themselves permanently informed.

Finally, it is worth emphasizing the essential role played by humans in CC. On the one hand, machine learning involves not only the automatic intake of data (selected by humans, or chosen according to criteria they provide) but must also be accompanied by expert training. On the other hand, cognitive systems produce reports, which the right people will study in order to make decisions.

This is what Bloomberg argues in the article “AI can not replace the human touch in cyber-security”, published last April, which gives the example of Mastercard Inc., which uses AI systems to detect abnormal transactions and then relies on cybersecurity professionals to assess the severity of the threat.

Conclusion: WannaCry

What would have happened if, once the code of the NSA tool “EternalBlue” had been published, machines had read that code and learned how it worked?

Perhaps its payload would have been deactivated, either because the machine’s Artificial Intelligence had recognized the code as malicious, or because it had looked for a patch for the vulnerability exploited to launch the attacks.

According to The Hacker News, after “The Shadow Brokers” leaked the vulnerability and the code in early April, it was exploited in several attacks during that month and, in May, was used in “WannaCry”.

Microsoft had already released patches in March that fixed the vulnerability in its SMB (Server Message Block) implementation for the operating systems it still supported and, after the WannaCry outbreak in May, extended them to versions it no longer supports, such as Windows XP.
