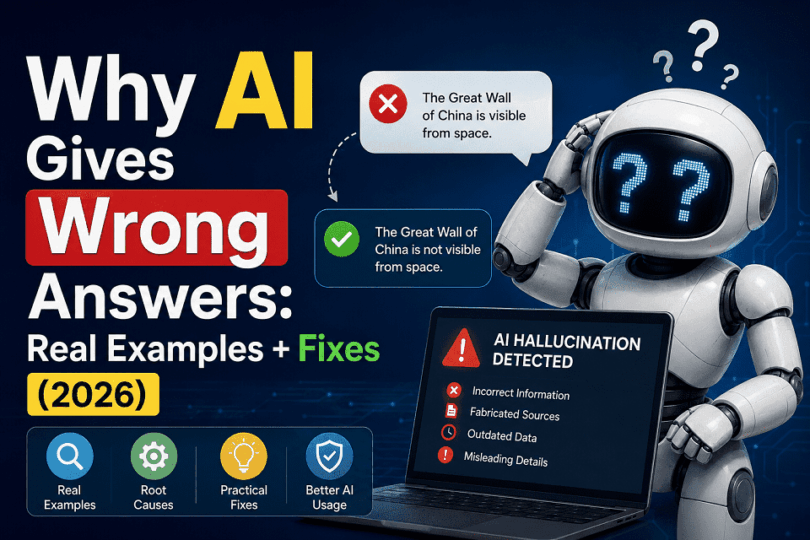

AI gives wrong answers due to limitations in training data, probabilistic predictions, a lack of real-world understanding, and hallucination effects. These errors can be reduced using better prompts, verification, and model improvements.

Introduction

AI gives wrong answers because it predicts responses using patterns instead of true understanding. Poor training data, hallucinations, weak context handling, and probabilistic prediction models can cause AI systems to generate false or misleading information. Users can reduce these errors by verifying outputs, using better prompts, and cross-checking reliable sources.

Artificial intelligence can write essays, answer questions, generate images, and even help developers write code. Tools like ChatGPT, Google Gemini, and Claude now support millions of users every day. But these systems still make surprising mistakes.

AI sometimes gives answers that sound correct but contain false information. It may invent facts, create fake references, misread context, or produce completely inaccurate explanations. Researchers often call this problem an “AI hallucination.”

This issue affects students, businesses, developers, researchers, and everyday users. A wrong AI answer can spread misinformation, create security risks, or damage trust in automated systems. The problem becomes more serious when people rely on AI without verification.

AI gives wrong answers for several reasons. Large language models predict patterns instead of understanding information like humans do. They depend heavily on training data, statistical probability, and prompt interpretation. Because of this, AI systems can generate confident responses even when the information is incomplete or incorrect.

In this guide, you will learn:

- Why AI gives wrong answers

- Real examples of AI hallucinations

- How these mistakes affect users

- Practical ways to reduce AI errors

You will also understand why modern AI still struggles with reasoning, accuracy, and factual reliability in 2026.

Key Takeaways

- AI predicts patterns instead of understanding facts

- Hallucinations can create fake citations and misinformation

- Better prompts and verification reduce AI mistakes

- RAG and alignment research improve reliability

- Human oversight remains essential

What Does It Mean When AI Gives Wrong Answers?

When AI gives a wrong answer, it produces information that is inaccurate, misleading, incomplete, or completely fabricated. In many cases, the response sounds confident and believable, which makes the mistake harder to detect.

This problem is commonly called an AI hallucination.

An AI hallucination happens when a model generates content that has no factual basis. The system may invent statistics, create fake citations, misidentify people, or describe events that never happened. The response often appears logical because modern AI models are trained to predict realistic language patterns.

For example, ChatGPT may generate a convincing explanation with incorrect historical facts. Google Gemini may summarize a topic but include false details or missing context. These systems do not “know” information as humans do. They predict the most probable next words based on patterns learned during training.

AI models can also:

- Misunderstand user intent

- Mix unrelated information

- Produce outdated answers

- Create non-existent references

These mistakes do not always happen because the AI lacks data. Sometimes the model misinterprets context or prioritizes language fluency over factual accuracy.

This issue affects nearly every major generative AI system, including Claude, Microsoft Copilot, and other large language models released in recent years.

Expert Insight:

“Large language models optimize for probable language generation, not guaranteed factual truth.”

Understanding AI hallucination is important because many users assume AI systems always provide reliable information. In reality, AI can sound highly confident while being completely wrong.

Why AI Gives Wrong Answers (Core Reasons)

AI systems can generate impressive responses, but they still make serious mistakes. These errors happen because modern AI models do not think or understand information like humans do. They rely on statistical prediction, training patterns, and probability.

Large language models such as ChatGPT and Google Gemini process enormous amounts of text data during training. They learn relationships between words, sentences, and patterns. Then they predict the most likely response to a user prompt.

This approach works well for many tasks, but it also creates important limitations.

AI can:

- Generate false information confidently

- Misunderstand context

- Mix accurate and inaccurate facts

- Fail with complex reasoning

The problem becomes more visible when users ask highly technical, ambiguous, or real-time questions. In these situations, the model may prioritize fluent language instead of factual correctness.

Another major issue involves training data quality. AI systems learn from large datasets collected from books, websites, articles, forums, and public content. If the data contains errors, bias, outdated information, or contradictions, the model can reproduce those problems in its answers.

AI also lacks genuine human understanding. It does not possess awareness, logic, emotions, beliefs, or real-world experience. The system predicts patterns mathematically rather than reasoning deeply about truth or accuracy.

These limitations create what researchers call AI hallucinations. The model generates content that appears realistic but contains fabricated or misleading information.

To understand this problem clearly, we must examine the core reasons behind AI errors and hallucinations.

1. Probabilistic Nature of AI

One of the biggest reasons AI gives wrong answers is simple: AI predicts responses instead of truly understanding information.

Large language models like ChatGPT and Google Gemini work using probability. They analyze patterns from massive datasets and predict the most likely next word in a sentence.

This process helps AI generate natural and fluent text. But it also creates a major weakness.

AI does not “know” facts the way humans do.

Instead, it calculates which response statistically fits the prompt based on patterns seen during training. The model may produce an answer that sounds correct even when the information is false.

For example, if many similar text patterns appear online, the AI may combine them into a believable but inaccurate response. This can lead to:

- Fake references

- Incorrect statistics

- Invented explanations

- Misleading summaries

The system focuses on language prediction, not truth verification.

This is why AI can confidently answer a question even when it lacks reliable information. The model attempts to complete the pattern rather than admit uncertainty.

Humans use reasoning, experience, and contextual understanding to judge accuracy. AI models mainly rely on mathematical probabilities and learned relationships between words.

This probabilistic design explains why modern AI systems sometimes produce highly convincing misinformation. The response may look intelligent, but the model is fundamentally predicting patterns rather than understanding reality.
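The idea of "predicting the most likely next word" can be shown with a small sketch. The scores below are invented for illustration and do not come from a real model. The point is only that a wrong word with a fairly high probability will be chosen some of the time.

```python
import math
import random

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores.values())
    exps = {tok: math.exp(s - peak) for tok, s in scores.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

# Hypothetical scores for the word after "The capital of Australia is".
# These numbers are assumptions made up for this example.
scores = {"Canberra": 2.0, "Sydney": 1.6, "Melbourne": 0.4}
probs = softmax(scores)

def sample_next_word(probs, rng):
    """Pick one word at random, weighted by its probability."""
    words = list(probs)
    weights = [probs[w] for w in words]
    return rng.choices(words, weights=weights, k=1)[0]

rng = random.Random(0)  # fixed seed so the run is repeatable
samples = [sample_next_word(probs, rng) for _ in range(1000)]
wrong_share = sum(word != "Canberra" for word in samples) / len(samples)

print(probs)
print(f"share of wrong completions: {wrong_share:.2f}")
```

In this toy setup the correct word is the single most likely choice, yet a large share of sampled completions are still wrong. Nothing in the sampling step checks whether the chosen word is true.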

2. Poor or Biased Training Data

AI models are only as reliable as the data used to train them. If the training data contains errors, bias, outdated information, or misinformation, the AI can reproduce those problems in its answers.

This is often described as:

“Garbage in, garbage out.”

Large AI systems such as ChatGPT and Google Gemini learn from enormous datasets collected from websites, books, forums, articles, code repositories, and public online content.

The internet contains valuable information, but it also includes:

- False claims

- Biased opinions

- Outdated facts

- Low-quality content

AI models cannot perfectly separate reliable information from inaccurate material during training. As a result, the system may absorb misleading patterns and repeat them later.

For example, if incorrect information appears repeatedly across many websites, the AI may treat it as statistically important. The model can then generate answers that sound authoritative but contain factual mistakes.
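A toy word-count model makes this effect visible. The four-sentence corpus below is invented, and real language models are far more complex than a counter. The sketch only shows how a claim that appears more often can win on frequency alone.

```python
from collections import Counter

# Toy "training corpus" (invented for illustration).
# The false claim appears three times, the correct one only once.
corpus = [
    "the wall is visible from the moon",
    "the wall is visible from the moon",
    "the wall is visible from the moon",
    "the wall is invisible from the moon",
]

def next_word_counts(corpus, context):
    """Count which word follows `context` across the corpus."""
    counts = Counter()
    n = len(context)
    for sentence in corpus:
        words = sentence.split()
        for i in range(len(words) - n):
            if words[i:i + n] == context:
                counts[words[i + n]] += 1
    return counts

counts = next_word_counts(corpus, ["wall", "is"])
most_likely = counts.most_common(1)[0][0]

print(counts)
print(most_likely)
```

The counter picks "visible" because it is more common in the data, not because it is correct.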

Bias in training data creates another major problem.

If datasets overrepresent certain viewpoints, regions, languages, or social perspectives, AI responses may become unbalanced or skewed. This issue affects areas such as:

- Hiring recommendations

- Medical information

- Political content

- Cultural representation

Training data also becomes outdated quickly. AI models trained on older datasets may provide obsolete statistics, expired regulations, or inaccurate technology information.

This limitation explains why AI sometimes struggles with current events, recent discoveries, or rapidly changing industries.

Even advanced AI systems cannot consistently produce reliable answers if the underlying training data contains significant flaws. The model learns patterns from data, so poor-quality input directly affects output quality.

3. Lack of Real-World Understanding

AI systems can process language quickly, but they still lack a genuine understanding of the real world. They do not think, reason, or experience reality as humans do.

This limitation is one of the main reasons AI gives incorrect answers.

Models such as ChatGPT and Google Gemini analyze patterns in text data. They learn how words and concepts commonly appear together. But they do not truly understand the meaning in a human sense.

For example, humans use:

- Common sense

- Lived experience

- Physical understanding

- Emotional context

AI models do not possess these abilities.

A human understands that ice melts in heat because of real-world experience. AI only recognizes statistical relationships between the words “ice” and “melting.” The model lacks physical awareness of temperature, matter, or sensory experience.

This difference creates serious reasoning limitations.

AI can sometimes:

- Misunderstand cause and effect

- Fail at logical consistency

- Confuse context

- Produce contradictory answers

The problem becomes more visible in complex discussions. Questions involving ethics, sarcasm, human emotion, or nuanced judgment often expose AI weaknesses.

For instance, an AI model may generate technically fluent explanations while missing obvious logical problems. It can also combine unrelated concepts into a response that sounds intelligent but lacks factual coherence.

This issue explains why AI occasionally fails at tasks humans consider simple. The system processes patterns mathematically rather than understanding reality conceptually.

Even the most advanced large language models still operate without true consciousness, awareness, or human-style reasoning. They simulate understanding through pattern prediction, which can create convincing but inaccurate answers.

4. Context Misinterpretation

AI models often struggle to maintain context during complex conversations or long prompts. This problem can cause the system to misunderstand instructions, lose important details, or generate inconsistent answers.

The issue becomes more noticeable when users provide:

- Multiple questions at once

- Lengthy technical prompts

- Unclear instructions

- Mixed topics in one conversation

Models such as ChatGPT and Google Gemini process information using token-based context windows. They attempt to track relationships between words, sentences, and instructions across a conversation.

But AI does not truly “remember” information like humans do.

As prompts become longer, the model may:

- Overlook critical details

- Prioritize less important context

- Confuse earlier instructions

- Mix unrelated information together

For example, a developer may ask AI to debug code while also requesting optimization suggestions and security improvements. The model might focus heavily on one task while ignoring another important instruction.

Long prompts can also increase hallucination risks. When context becomes complicated, the AI may attempt to fill in missing gaps with predicted information instead of acknowledging uncertainty.

This limitation affects many real-world use cases, including:

- Coding assistance

- Legal document analysis

- Academic research

- Technical troubleshooting

Context misinterpretation explains why AI sometimes gives answers that appear partially correct but contain subtle errors. The response may follow part of the prompt accurately while misunderstanding the broader intent.

Humans naturally use reasoning and situational awareness to maintain context during conversations. AI systems mainly rely on statistical relationships inside a limited context window, which makes complex instruction handling much harder.
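One simple way a limited context window can lose an instruction is shown below. This is a sketch under assumptions: the word-count "tokenizer", the 25-token budget, and the keep-the-newest-messages policy are all hypothetical. Real systems use subword tokenizers and may handle overflow differently.

```python
def count_tokens(text):
    """Crude stand-in for a tokenizer: one token per whitespace-separated word."""
    return len(text.split())

def fit_to_window(messages, max_tokens):
    """Keep the most recent messages that fit within the token budget."""
    kept = []
    used = 0
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break  # everything older than this point is dropped
        kept.append(message)
        used += cost
    return list(reversed(kept))

conversation = [
    "Always answer in formal English and never change the public API.",
    "Here is the function that crashes on empty input.",
    "Also suggest optimizations for the inner loop.",
    "Finally, review the code for security problems.",
]

window = fit_to_window(conversation, max_tokens=25)
for message in window:
    print(message)
```

With this budget, the first message no longer fits, so the rule about the public API never reaches the model. The answer can then follow the later requests while breaking the earliest instruction.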

5. Hallucination Phenomenon

AI hallucination is one of the most serious problems in modern artificial intelligence. It happens when an AI system generates information that sounds accurate but is actually false, fabricated, or misleading.

The most dangerous part is confidence.

Models such as ChatGPT and Google Gemini can produce highly convincing answers even when the information is completely wrong.

AI hallucinations may include:

- Fake citations

- Invented research papers

- Incorrect historical facts

- Fabricated statistics

In many cases, the response looks professional and well-structured. This makes hallucinations difficult for users to detect quickly.

The problem occurs because AI models try to generate the most probable continuation of text. When reliable information is missing or unclear, the system may “fill the gaps” with generated content instead of admitting uncertainty.

For example, an AI chatbot may invent:

- A non-existent scientific study

- A fake legal case

- Incorrect software commands

- Fictional expert quotes

The model does not intentionally deceive users. It predicts language patterns mathematically and sometimes produces fabricated content that statistically appears plausible.

Hallucinations become especially risky in fields that require high accuracy, including:

- Medicine

- Cybersecurity

- Law

- Finance

- Scientific research

A fabricated answer in these areas can create serious consequences.

This issue also explains why AI-generated misinformation spreads easily online. Many users trust fluent and confident responses without verifying sources or checking factual accuracy.

Researchers continue improving hallucination reduction techniques, but the problem still affects nearly every major generative AI system in 2026. Even advanced models can generate persuasive answers that contain hidden inaccuracies.

Where Does AI Get Its Information From?

Many people assume AI systems “know” information like humans do. In reality, AI models learn from enormous amounts of training data collected from many different sources.

Tools such as ChatGPT and Google Gemini generate responses by learning patterns from text, code, images, and other digital content.

This training process explains both the strengths and weaknesses of modern AI systems.

AI Learns From Massive Training Data

Modern AI systems learn from enormous collections of digital information called training datasets. These datasets contain billions of words gathered from many different sources across the internet and other digital archives.

Large language models such as ChatGPT and Google Gemini are trained using data that may include books, news articles, websites, scientific papers, online discussions, educational material, and programming code.

The goal of this training process is to teach the AI how human language works.

During training, the model analyzes patterns between words, sentences, and concepts. It learns how people ask questions, explain ideas, write code, discuss science, and communicate information across different contexts.

For example, if the model repeatedly sees phrases connecting “gravity” with “force,” “mass,” and “motion,” it gradually learns statistical relationships between those concepts.

The training data may come from:

- Public internet content

- Licensed datasets

- Books and journals

- Educational resources

- Code repositories

- Discussion forums

Some datasets also include multilingual content, technical documentation, and structured information designed to improve reasoning and language understanding.

However, the internet contains both accurate and inaccurate information. Training data may include outdated facts, misinformation, bias, low-quality content, and contradictory opinions. Because of this, AI models can sometimes reproduce errors that already exist in the source material.

Another important point is that AI does not memorize information the same way humans memorize facts. Instead, the system learns statistical patterns and relationships from enormous amounts of text.

This pattern-learning approach allows AI to:

- Generate human-like responses

- Summarize information

- Answer questions

- Translate languages

- Produce computer code

But it also explains why hallucinations happen. The AI predicts likely responses based on patterns rather than independently verifying factual truth.

AI Does Not “Know” Information Like Humans

One of the biggest misconceptions about artificial intelligence is the belief that AI systems truly “understand” information. In reality, modern AI models do not think, reason, or experience the world as humans do.

Humans learn through:

- Physical experience

- Observation

- Emotional understanding

- Social interaction

- Logical reasoning

AI systems learn through mathematical pattern analysis.

Large language models process enormous amounts of text and identify relationships between words, phrases, and concepts. The system predicts which words are most likely to appear together based on previous training examples.

This process creates the illusion of understanding.

For example, an AI chatbot may generate a detailed explanation about oceans, fire, emotions, or human relationships. But the model has never physically experienced water, heat, fear, pain, or social interaction.

The AI only recognizes language patterns connected to those topics.

This distinction is extremely important.

Humans understand concepts through awareness and real-world context. AI systems simulate understanding through probability calculations.

Because AI lacks consciousness and common sense, it can:

- Misunderstand subtle context

- Fail logical reasoning tasks

- Invent information confidently

- Produce contradictory explanations

For instance, a human can recognize when a statement sounds unrealistic or dangerous based on practical experience. AI systems often cannot evaluate information that way.

This limitation explains why even advanced models such as ChatGPT and Google Gemini can generate highly fluent but factually incorrect answers.

The system predicts language patterns effectively, but prediction is not the same as genuine comprehension.

Some AI Systems Use Real-Time Retrieval

Earlier AI systems depended almost entirely on static training data collected during model development. This created a major limitation because the world changes constantly.

New scientific discoveries, cybersecurity threats, laws and regulations, political events, and technology updates appear every day.

If an AI model only relies on older training data, it may provide outdated or incomplete information.

To solve this problem, many modern AI systems now use retrieval technologies alongside language generation models. One of the most important approaches is called Retrieval-Augmented Generation, commonly known as RAG.

RAG systems work differently from traditional AI models.

Instead of generating answers only from stored training patterns, the AI first retrieves relevant information from external sources before creating a response. These sources may include:

- search engines

- enterprise databases

- research repositories

- documentation systems

- live knowledge platforms

This process improves factual grounding and allows the AI to access more recent information.

For example, an enterprise AI assistant may retrieve the latest company documentation before answering employee questions. A research-focused AI system may search updated scientific databases before generating summaries.
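The retrieve-then-generate flow can be sketched in a few lines. Everything here is an assumption made for illustration: the documents are invented, keyword overlap stands in for a real search or vector index, and no language model is called. The sketch stops at building the prompt that a model would receive.

```python
def tokenize(text):
    """Lowercase and split on whitespace, stripping basic punctuation."""
    return {word.strip(".,?!").lower() for word in text.split()}

def retrieve(question, documents, top_k=1):
    """Rank documents by how many words they share with the question."""
    q_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(q_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]

def build_prompt(question, passages):
    """Place retrieved passages ahead of the question to ground the answer."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below. "
        "If the context is not enough, say so.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )

# Hypothetical company documents, invented for this example.
documents = [
    "Employees receive 25 days of annual leave starting in 2026.",
    "The office cafeteria is open from 8 am to 3 pm.",
    "Expense reports must be submitted within 30 days.",
]

question = "How many days of annual leave do employees receive?"
passages = retrieve(question, documents)
prompt = build_prompt(question, passages)
print(prompt)
```

The model still has to read the retrieved passage correctly. Retrieval supplies the facts, but it does not guarantee that the generated answer uses them properly.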

Real-time retrieval offers several important advantages. It can:

- improve accuracy

- reduce hallucinations

- access updated knowledge

- provide more relevant answers

However, retrieval systems still have limitations.

The AI may retrieve correct information but interpret it incorrectly. It may also combine multiple sources poorly, misunderstand context, or generate misleading summaries from partially accurate data.

Even search-connected AI systems can therefore produce hallucinations.

This is why retrieval technology improves AI reliability but does not completely eliminate factual errors. Human verification still remains essential when using AI systems for professional, academic, legal, or technical work.

Inaccurate Content Vs Biased Content Comparison Table

| Aspect | Inaccurate Content | Biased Content |
| --- | --- | --- |
| Definition | Information that is factually incorrect or misleading | Information that unfairly favors one viewpoint or perspective |
| Main Cause | Errors, hallucinations, outdated data, or weak verification | Skewed datasets, social bias, cultural imbalance, or selective training data |
| AI Behavior | Generates false facts or fabricated details | Produces one-sided or unfair responses |
| Example | AI invents a non-existent research paper | AI favors one political or social viewpoint repeatedly |
| Accuracy Level | Factually wrong | May contain facts, but present them unfairly |
| Risk to Users | Spreads misinformation and confusion | Reinforces stereotypes and distorted perspectives |
| Common Areas Affected | Science, medicine, coding, history | Politics, hiring, culture, and social topics |
| Detection Difficulty | Easier to verify through fact-checking | Harder to detect because bias can be subtle |
| Impact on Decision-Making | Leads to incorrect conclusions or actions | Influences opinions and judgment unfairly |
| Possible Solution | Better verification and retrieval systems | Better dataset diversity and fairness controls |

How to Know if an AI Answer Is Hallucinated

AI hallucinations can look surprisingly convincing. The response may sound professional, detailed, and confident even when the information is completely incorrect.

Because of this, users should learn how to recognize warning signs of hallucinated AI content.

The AI Gives Confident Answers Without Evidence

One common warning sign is excessive confidence without supporting proof.

AI systems such as ChatGPT and Google Gemini may present information as factual even when no reliable evidence exists.

If the response contains:

- strong claims

- precise statistics

- technical explanations

but provides no trustworthy sources, verification becomes important.

Confident wording does not guarantee accuracy.

Citations or Links Look Suspicious

Hallucinated AI answers often include:

- fake references

- broken links

- non-existent research papers

- fabricated expert quotes

For example, an AI chatbot may generate a citation that appears academically correct but leads to no real publication.

Users should verify:

- author names

- article titles

- journal references

- website links

through independent searches or official databases.

The Information Contradicts Trusted Sources

Another major warning sign appears when AI-generated information conflicts with reliable sources.

If an AI answer differs significantly from:

- official documentation

- scientific consensus

- government websites

- trusted experts

the response may contain hallucinations or outdated information.

Cross-checking important claims helps identify inaccuracies quickly.

The Response Contains Logical Inconsistencies

Hallucinated AI content sometimes becomes internally inconsistent.

For example, the AI may:

- contradict earlier statements

- mix unrelated facts

- produce impossible timelines

- change explanations midway

These logical inconsistencies often appear in:

- long conversations

- technical explanations

- historical discussions

- coding outputs

Carefully reviewing the response structure can expose hidden errors.

The AI Avoids Admitting Uncertainty

Humans often admit when they do not know something. AI systems may behave differently.

Some models generate fabricated answers instead of saying:

- “I am unsure”

- “I do not have enough information”

- “This information may be outdated”

This happens because AI models optimize for fluent responses and conversational continuity.

A chatbot that answers every question with extreme certainty may therefore produce more hallucinations.

The Answer Sounds Overly Generic or Artificial

Hallucinated responses sometimes contain vague or repetitive language that sounds informative but lacks meaningful detail.

For example, the AI may:

- avoid precise explanations

- repeat broad statements

- provide generic summaries

- use filler language instead of evidence

This pattern often appears when the model lacks strong factual grounding.

Technical or Mathematical Results Cannot Be Verified

AI systems can also hallucinate:

- code functions

- software commands

- equations

- calculations

- configuration steps

Developers and technical users should always test AI-generated outputs instead of assuming they are correct.

Even advanced AI systems can generate technically plausible but incorrect instructions.
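A few quick checks are often enough to expose a plausible-looking mistake. In the sketch below, `average_generated` is a hypothetical function written the way an assistant might produce it, and the test cases are assumptions about what the caller needs, including the choice to return 0.0 for an empty list.

```python
# Hypothetical function as an AI assistant might produce it.
# It looks plausible but does not handle an empty list.
def average_generated(values):
    return sum(values) / len(values)

# Reviewed version that defines the behavior for empty input.
def average_reviewed(values):
    if not values:
        return 0.0
    return sum(values) / len(values)

def run_checks(func):
    """Return a list of failed check descriptions for `func`."""
    cases = [([2, 4, 6], 4.0), ([5], 5.0), ([], 0.0)]
    failures = []
    for inputs, expected in cases:
        try:
            result = func(inputs)
        except Exception as error:
            failures.append(f"{inputs!r} raised {type(error).__name__}")
            continue
        if result != expected:
            failures.append(f"{inputs!r} returned {result!r}, expected {expected!r}")
    return failures

generated_failures = run_checks(average_generated)
reviewed_failures = run_checks(average_reviewed)

print(generated_failures)
print(reviewed_failures)
```

The generated version passes the ordinary cases and fails only on the edge case, which is the kind of error that reading the code alone tends to miss.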

The safest approach is simple: treat AI as a helpful assistant, not an unquestionable authority. Verification, critical thinking, and trusted sources remain essential when evaluating AI-generated information.

Real Examples of AI Giving Wrong Answers

AI hallucinations are not theoretical problems anymore. Real users, businesses, researchers, and developers encounter incorrect AI outputs every day. Some mistakes are harmless, but others can create serious consequences.

The following examples show how modern AI systems can produce confident but inaccurate information.

1. Fake Citations and Non-Existent Research Papers

One of the most common AI mistakes involves fabricated references.

Users often ask AI chatbots to generate academic sources, journal citations, or supporting evidence. In some cases, the AI creates references that look authentic but do not actually exist.

For example, ChatGPT has generated:

- fake author names

- non-existent journal articles

- incorrect publication dates

- fabricated DOI numbers

The citations often appear highly convincing because the formatting resembles real academic writing.

This issue became widely discussed after lawyers reportedly submitted AI-generated legal citations containing fictional court cases. The fabricated references created major credibility and legal concerns.

AI models generate these errors because they predict what a realistic citation should look like instead of verifying whether the source truly exists.

2. Incorrect Medical Advice

AI systems can also provide dangerous medical misinformation.

Some users ask AI chatbots for:

- symptom analysis

- medication guidance

- treatment suggestions

- mental health advice

Although AI can summarize medical information well, it sometimes generates incorrect or unsafe recommendations.

For example, an AI model may:

- misunderstand symptoms

- suggest the wrong medication

- ignore dangerous drug interactions

- provide outdated health information

This problem becomes serious when users trust AI responses without consulting medical professionals.

Models such as Google Gemini and Microsoft Copilot include safety systems to reduce harmful outputs, but hallucinations and factual mistakes still occur.

Medical AI errors highlight an important limitation: fluent language does not guarantee accurate healthcare advice.

3. Wrong Coding Outputs and Security Risks

Developers increasingly use AI tools for programming assistance, debugging, and code generation. But AI-generated code can contain hidden errors, inefficient logic, or security vulnerabilities.

For example, AI coding assistants may:

- generate insecure authentication code

- recommend outdated libraries

- introduce memory leaks

- produce broken syntax

A generated script may appear correct at first glance but fail during execution.

In cybersecurity, these mistakes become even more dangerous. An AI model could accidentally generate vulnerable code patterns that expose systems to attacks.

Tools like GitHub Copilot can improve developer productivity, but programmers still need manual review and testing.

AI can assist coding, but it cannot replace human verification and secure development practices.
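As one illustration of insecure authentication code, compare two ways of storing a password. This is a sketch, not a complete authentication system. The function names are hypothetical, the iteration count is an illustrative value rather than a recommendation, and production systems should follow current security guidance or use a maintained library.

```python
import hashlib
import hmac
import secrets

# Pattern an assistant might suggest: a fast, unsalted hash.
# Identical passwords always produce identical hashes, which helps attackers.
def weak_hash(password):
    return hashlib.md5(password.encode()).hexdigest()

# Safer sketch using only the standard library:
# a random per-user salt plus a deliberately slow key derivation function.
def strong_hash(password, salt=None, iterations=200_000):
    salt = salt or secrets.token_bytes(16)
    digest = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, iterations)
    return salt, digest

def verify(password, salt, expected, iterations=200_000):
    """Recompute the digest and compare it in constant time."""
    _, digest = strong_hash(password, salt, iterations)
    return hmac.compare_digest(digest, expected)

salt, stored = strong_hash("correct horse")
print(verify("correct horse", salt, stored))
print(verify("wrong guess", salt, stored))
```

Both versions run without errors, so testing alone would not flag the weak one. That is why generated security code needs a human review in addition to tests.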

4. Fabricated Historical Facts

AI models sometimes invent historical details that never happened.

For example, a chatbot may:

- create fictional events

- misidentify historical figures

- invent speeches or quotations

- combine unrelated timelines

Because AI prioritizes natural language flow, the answer may sound highly authoritative even when the facts are incorrect.

A user asking about a historical war or political event may receive:

- inaccurate dates

- incorrect locations

- false explanations

- fictional participants

These mistakes become difficult to detect when users lack background knowledge on the topic.

Unlike search engines, generative AI systems do not always retrieve verified historical records directly. Instead, they generate responses from learned language patterns, which increases the risk of factual distortion.

5. AI Generating False News Summaries

AI tools can summarize articles quickly, but they sometimes distort important information.

In several cases, AI-generated news summaries have:

- changed the meaning of headlines

- omitted critical context

- mixed unrelated stories

- introduced factual inaccuracies

This problem becomes dangerous during:

- elections

- financial reporting

- breaking news events

- geopolitical conflicts

A small factual error in a generated summary can spread misinformation rapidly across social media and online platforms.

AI models struggle especially with fast-changing information because training data may already be outdated.

6. Incorrect Mathematical and Logical Answers

AI models can also fail at basic reasoning tasks.

Even advanced systems sometimes:

- miscalculate equations

- skip logical steps

- contradict earlier answers

- produce impossible conclusions

For example, an AI chatbot may confidently provide an incorrect calculation while explaining the process fluently.

The issue happens because language models predict patterns in text rather than performing true symbolic reasoning like a dedicated calculator or mathematical engine.
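A practical response is to recompute any arithmetic that appears in an AI answer. The sketch below uses a small, restricted evaluator instead of `eval()`. The claimed result is a hypothetical AI output invented for this example.

```python
import ast
import operator

# Only these four operators are allowed.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}

def evaluate(expression):
    """Evaluate +, -, *, / on plain numbers without using eval()."""
    def walk(node):
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.left), walk(node.right))
        raise ValueError("unsupported expression")
    return walk(ast.parse(expression, mode="eval").body)

def check_claim(expression, claimed):
    """Compare a value claimed in an AI answer with the computed value."""
    actual = evaluate(expression)
    return actual == claimed, actual

# Hypothetical AI answer: "17 * 24 = 418".
ok, actual = check_claim("17 * 24", 418)
print(ok, actual)  # False 408
```

The computed value comes from actual arithmetic, so it does not depend on how convincing the explanation around the claim sounds.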

This limitation explains why AI occasionally struggles with:

- multi-step logic

- advanced mathematics

- precise analytical reasoning

- complex technical calculations

These real-world examples show a critical reality about modern AI systems: strong language generation does not guarantee factual accuracy. AI can sound intelligent and persuasive while still producing false or misleading information.

Why AI Hallucinations Are Dangerous

AI hallucinations are more than technical mistakes. They can create real-world harm when users trust inaccurate information without verification.

Modern AI systems generate responses quickly and confidently. Because the answers sound natural and professional, many users assume the information is reliable. This false confidence makes hallucinations especially dangerous.

The risks affect individuals, businesses, researchers, governments, and online platforms.

Misinformation and Public Confusion

AI hallucinations can spread false information on a massive scale.

A chatbot may generate:

- inaccurate news summaries

- fake scientific claims

- incorrect educational content

- misleading social media posts

Many users do not verify AI-generated information before sharing it online. As a result, misinformation can spread rapidly across websites, forums, and social platforms.

This issue becomes more serious during:

- elections

- health emergencies

- political conflicts

- breaking news events

For example, a fabricated AI-generated claim about medicine or public safety could influence thousands of people within hours.

Models such as ChatGPT and Google Gemini continue improving factual accuracy, but hallucinations still occur under complex or ambiguous prompts.

The combination of speed, confidence, and automation increases misinformation risks significantly.

Business and Financial Risks

AI hallucinations can also damage businesses and professional workflows.

Many organizations now use AI for:

- customer support

- research summaries

- report generation

- software development

If the AI produces incorrect information, the consequences may include:

- financial losses

- legal problems

- operational failures

- reputational damage

For example, an AI-generated business report may contain false statistics or fabricated market analysis. A company relying on inaccurate data could make poor strategic decisions.

Hallucinations also create risks in automated customer service systems. An AI assistant may provide incorrect policy information, inaccurate pricing, or misleading technical guidance.

In regulated industries such as finance, healthcare, and law, even small factual mistakes can create serious liability concerns.

Businesses, therefore, cannot rely on AI outputs without human review and verification.

Cybersecurity and Security Implications

AI hallucinations also introduce important cybersecurity risks.

Developers increasingly use AI tools for coding, automation, and infrastructure management. But AI-generated outputs can contain hidden vulnerabilities or dangerous recommendations.

For example, an AI model may:

- generate insecure code

- recommend unsafe configurations

- produce flawed authentication logic

- suggest vulnerable software libraries

These errors can expose systems to cyberattacks.

In cybersecurity operations, inaccurate AI-generated analysis may also:

- misclassify threats

- overlook malicious activity

- create false alerts

- provide incorrect remediation steps

This becomes especially dangerous when organizations automate security decisions using AI-assisted systems.

Attackers can also exploit hallucinations directly. Some malicious users intentionally manipulate AI prompts to generate misleading outputs, bypass safeguards, or spread disinformation.

As AI adoption grows across industries, hallucination risks become both a technical and a security challenge.

The core problem remains the same: AI systems can produce highly convincing information without guaranteeing factual correctness.

How to Fix AI Wrong Answers (Practical Solutions)

AI hallucinations cannot be eliminated completely in 2026, but users and developers can reduce them significantly. The key is understanding that AI outputs require verification, context control, and better system design.

Both human behavior and technical improvements play an important role in reducing AI mistakes.

For Users

Everyday users can reduce AI errors by interacting with AI systems more carefully and critically.

Use Precise Prompts

Vague prompts often produce vague or inaccurate answers.

AI models such as ChatGPT and Google Gemini perform better when instructions are clear and specific.

For example, instead of asking:

“Explain cybersecurity”

A better prompt would be:

“Explain ransomware attacks in simple terms with real-world examples from 2025.”

Specific prompts improve context accuracy and reduce hallucination risks.

Users should also:

- define the task clearly

- mention the desired output format

- provide enough context

- avoid combining unrelated questions

Better prompts usually produce more reliable answers.
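
The checklist above can be turned into a small reusable template. This is a minimal sketch, not a standard: `build_prompt` and its field labels ("Task", "Context", "Format", "Constraint") are hypothetical choices made for illustration.

```python
def build_prompt(task: str, context: str = "", output_format: str = "",
                 constraints: tuple = ()) -> str:
    """Assemble a single-purpose prompt from clearly labeled parts."""
    parts = [f"Task: {task.strip()}"]
    if context:
        parts.append(f"Context: {context.strip()}")
    if output_format:
        parts.append(f"Format: {output_format.strip()}")
    for rule in constraints:
        parts.append(f"Constraint: {rule}")
    return "\n".join(parts)


prompt = build_prompt(
    task="Explain ransomware attacks in simple terms.",
    context="The reader is a small-business owner with no IT background.",
    output_format="Five short bullet points.",
    constraints=("Say 'I am not sure' instead of guessing.",),
)
print(prompt)
```

Keeping one task per prompt and stating the format up front leaves the model less room to fill gaps with guesses.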

Cross-Check Important Information

AI should not be treated as a perfect source of truth.

Users should verify:

- medical advice

- legal information

- financial guidance

- academic references

Cross-checking with trusted websites, official documentation, research papers, or professional experts helps detect hallucinations quickly.

This step becomes critical when AI-generated information affects real-world decisions.

Even advanced AI systems can produce confident but incorrect responses. Verification remains essential.

Ask for Sources and Evidence

Users should ask AI systems to provide:

- references

- citations

- supporting evidence

- official sources

This approach helps users evaluate whether the information appears credible.

However, AI-generated citations can sometimes be fabricated. Users must still verify whether the sources actually exist.

Asking for evidence encourages more transparent and traceable AI outputs, especially during research or technical work.
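
Because citations can be invented, it helps to pull every reference out of an answer so each one can be checked by hand. The sketch below assumes references appear as URLs or DOI-style identifiers. `extract_references` is a hypothetical helper, and the regular expressions are rough approximations rather than full URL or DOI grammars.

```python
import re

URL_PATTERN = re.compile(r"https?://[^\s)>\]]+")
DOI_PATTERN = re.compile(r"\b10\.\d{4,9}/[^\s\"<>]+")


def extract_references(answer: str) -> dict:
    """Collect URL-like and DOI-like strings for manual verification."""

    def clean(match: str) -> str:
        # Drop punctuation that belongs to the sentence, not the reference.
        return match.rstrip(".,;)")

    return {
        "urls": [clean(m) for m in URL_PATTERN.findall(answer)],
        "dois": [clean(m) for m in DOI_PATTERN.findall(answer)],
    }


text = "See https://example.org/paper and doi 10.1234/abcd.5678."
print(extract_references(text))
```

The function only lists what the answer claims. Whether each source exists, and whether it really says what the AI reports, still has to be confirmed against the source itself.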

For Developers

Developers and AI researchers continue building techniques to reduce hallucinations and improve factual accuracy.

Fine-Tuning AI Models

Fine-tuning allows developers to train AI systems on specialized and higher-quality datasets.

This process helps the model:

- follow instructions better

- improve domain accuracy

- reduce irrelevant outputs

- align with specific tasks

For example, a medical AI assistant fine-tuned on verified healthcare datasets will usually answer medical questions more reliably than a general-purpose chatbot.

Fine-tuning improves reliability, but it still cannot guarantee perfect accuracy.

Retrieval-Augmented Generation (RAG)

Retrieval-Augmented Generation, often called RAG, is one of the most important hallucination-reduction techniques today.

Instead of relying only on training data, RAG systems retrieve information from external sources in real time before generating an answer.

This approach allows AI systems to:

- access updated information

- cite relevant documents

- improve factual grounding

- reduce fabricated responses

Many modern enterprise AI systems now use RAG frameworks to improve reliability in:

- customer support

- research tools

- technical documentation

- business knowledge systems

RAG does not fully eliminate hallucinations, but it significantly improves factual consistency.
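
The retrieve-then-generate flow described above can be sketched in a few lines. This toy version scores documents by shared words, whereas production systems typically use embedding-based search. The function names and the prompt wording are assumptions made for illustration, and the final call to a language model is left out.

```python
import re


def tokenize(text: str) -> set:
    """Lowercase the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list, k: int = 2) -> list:
    """Return up to k documents sharing the most words with the query."""
    query_tokens = tokenize(query)
    scored = [(len(query_tokens & tokenize(doc)), doc) for doc in documents]
    scored = [pair for pair in scored if pair[0] > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:k]]


def build_grounded_prompt(query: str, documents: list, k: int = 2):
    """Build a prompt that restricts the model to the retrieved passages."""
    passages = retrieve(query, documents, k)
    if not passages:
        return None  # Nothing relevant found: better to say so than to guess.
    numbered = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, 1))
    return (
        "Answer using only the passages below and cite them by number. "
        "If they do not contain the answer, say so.\n\n"
        f"{numbered}\n\nQuestion: {query}"
    )


docs = [
    "Refunds are accepted within 30 days of purchase.",
    "Our office is closed on public holidays.",
    "Shipping takes 5 business days.",
]
print(build_grounded_prompt("What is the refund window in days for a purchase?", docs, k=1))
```

The model can still misread the passages it is given, which is why retrieval improves grounding without guaranteeing it.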

Using Better Training Datasets

High-quality training data remains one of the most important factors in AI reliability.

Developers improve model accuracy by:

- removing low-quality content

- filtering misinformation

- reducing bias

- updating outdated datasets

Cleaner datasets help AI models learn more reliable language patterns and factual relationships.
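
A simplified illustration of this kind of cleaning is shown below. It assumes each record is a dictionary with `text` and `source` keys. The function name, the five-word minimum, and the blocklist are hypothetical, and real pipelines use far more sophisticated quality and misinformation filters.

```python
def clean_corpus(records: list, min_words: int = 5,
                 blocked_sources: frozenset = frozenset()) -> list:
    """Drop blocked, very short, and duplicate records from a text corpus."""
    seen = set()
    kept = []
    for record in records:
        text = " ".join(record["text"].split())  # normalize whitespace
        if record.get("source") in blocked_sources:
            continue  # known low-quality source
        if len(text.split()) < min_words:
            continue  # too short to be useful
        key = text.lower()
        if key in seen:
            continue  # duplicate, ignoring case and spacing
        seen.add(key)
        kept.append({**record, "text": text})
    return kept


records = [
    {"text": "The  Eiffel Tower is in Paris, France.", "source": "a"},
    {"text": "the eiffel tower is in paris, france.", "source": "b"},
    {"text": "click here", "source": "a"},
    {"text": "The moon is made entirely of green cheese.", "source": "spam"},
]
cleaned = clean_corpus(records, blocked_sources=frozenset({"spam"}))
print(len(cleaned))  # 1
```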

This process also improves fairness, consistency, and contextual understanding across AI systems.

Better data alone cannot solve every hallucination problem, but poor data almost always increases error rates.

Reducing AI hallucinations requires both technical improvements and responsible user behavior. AI systems continue improving rapidly, but human verification remains essential for accuracy and trust.

Best Ways to Avoid AI Wrong Answers

AI systems can save time, improve productivity, and simplify research. But users should never assume that every AI-generated response is accurate. The safest approach is learning how to use AI critically and responsibly.

The following practices can help reduce the risk of hallucinations, misinformation, and misleading outputs.

Verify Important Information

Users should always verify important AI-generated information before trusting or sharing it.

This is especially important for:

- medical advice

- legal information

- financial guidance

- cybersecurity recommendations

- academic research

AI systems such as ChatGPT and Google Gemini can produce confident responses that still contain factual errors.

Cross-checking information with:

- official websites

- trusted publications

- scientific papers

- expert sources

helps identify hallucinations and misinformation quickly.

Blind trust in AI outputs can create serious problems, especially in professional or high-risk situations.

Use Better Prompts

Prompt quality has a major impact on AI accuracy.

Vague prompts often generate vague or misleading answers. Clear and detailed instructions usually improve response quality and reduce hallucination risks.

For example, instead of asking:

“Explain cybersecurity”

A better prompt would be:

“Explain ransomware attacks with recent examples and prevention methods.”

Context-rich prompts help AI understand:

- user intent

- desired format

- technical depth

- response boundaries

Users can also improve accuracy by asking step-by-step questions instead of combining multiple complex requests into one prompt.

Breaking large problems into smaller tasks often produces more reliable outputs.

Ask AI for Sources and References

Users should ask AI systems to provide:

- citations

- references

- supporting evidence

- official documentation

This makes it easier to evaluate whether the information appears trustworthy.

However, users must still verify the sources manually because AI systems can sometimes generate fake citations or incorrect references.

Evidence-based responses are generally safer than unsupported claims.

When possible, users should compare AI-generated information with trusted external sources instead of relying on the chatbot alone.

Combine AI With Human Judgment

AI works best as an assistant, not as a replacement for human thinking.

Humans still outperform AI in:

- common sense reasoning

- ethical judgment

- emotional understanding

- contextual decision-making

AI systems can generate information quickly, but humans must evaluate whether the output is logical, safe, and accurate.

This is why many organizations use “human-in-the-loop” systems where experts review AI-generated outputs before decisions are finalized.

Critical thinking remains essential when using AI tools for:

- education

- healthcare

- law

- journalism

- cybersecurity

The most effective approach is combining AI efficiency with human oversight and verification.

Can AI Ever Be 100% Accurate?

The short answer is no.

AI systems are becoming more advanced every year, but perfect accuracy remains unrealistic. Modern AI models still depend on probability, training data, and pattern prediction. Because of this, errors and hallucinations can never be removed completely.

Tools such as ChatGPT and Google Gemini can improve productivity and research, but they still have important limitations.

AI Predicts Patterns Instead of Understanding Truth

AI models do not “know” facts like humans do. They generate answers by predicting the most likely sequence of words based on training patterns.

This approach allows AI to produce fluent and natural responses. But it can also produce inaccuracies.

The model may generate answers that sound correct even when the information is false or misleading. AI focuses on language prediction, not true understanding or independent fact verification.
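
A toy example makes the point concrete. The scores below are invented purely for illustration and are not measurements from any real model. They stand in for how strongly a model might favor each candidate word after the phrase "The capital of Australia is".

```python
import math


def softmax(scores: dict) -> dict:
    """Convert raw scores into probabilities that sum to 1."""
    top = max(scores.values())
    exps = {token: math.exp(s - top) for token, s in scores.items()}
    total = sum(exps.values())
    return {token: e / total for token, e in exps.items()}


# Invented scores: the frequently mentioned city outranks the correct one.
scores = {"Sydney": 2.1, "Canberra": 1.9, "Melbourne": 0.4}
probs = softmax(scores)
best = max(probs, key=probs.get)

print(best)  # Sydney
```

Nothing in this selection step checks which candidate is true. The most probable word wins, and in this made-up case that word is wrong, since the capital of Australia is Canberra.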

This limitation explains why hallucinations still happen in advanced AI systems.

Training Data Is Never Perfect

AI systems learn from massive datasets collected from websites, books, research papers, forums, and public online content.

The problem is that human-created data already contains:

- misinformation

- bias

- outdated facts

- contradictions

AI models absorb these patterns during training. As a result, they can reproduce the same errors later.

Even with better filtering and moderation, developers cannot completely remove every inaccurate or biased source from large-scale datasets.

The Real World Changes Constantly

Knowledge changes rapidly in areas such as:

- science

- cybersecurity

- medicine

- law

- technology

An AI model trained months earlier may already contain outdated information.

Even AI systems connected to live retrieval tools can still misunderstand or misrepresent recent developments. This creates another major barrier to perfect accuracy.

Humans continuously update their understanding through experience and observation. AI systems depend on external updates and retraining cycles.

AI Still Lacks Human Judgment

Humans use common sense, emotional understanding, reasoning, and real-world experience to evaluate information.

AI does not possess these abilities.

For example, AI models often struggle with:

- sarcasm

- ethical judgment

- emotional nuance

- contextual reasoning

The system processes patterns mathematically instead of understanding situations conceptually.

Because of this, AI can sometimes produce technically fluent answers that fail logical or practical reasoning tests.

Some Questions Do Not Have One Correct Answer

Not every problem has a single objective solution.

Topics involving politics, ethics, philosophy, culture, and law often depend on interpretation and context. AI systems may generate different answers depending on prompt wording, training data, or contextual assumptions.

This makes absolute accuracy impossible in many discussions.

AI Will Improve, But Errors Will Remain

Researchers continue improving AI reliability through:

- better datasets

- fine-tuning

- reasoning models

- Retrieval-Augmented Generation (RAG)

These technologies reduce hallucinations and improve factual grounding. But they cannot guarantee perfect correctness in every situation.

The future of AI is not about creating flawless systems. The real goal is building AI that is more reliable, transparent, and easier for humans to verify safely.

AI Hallucination vs Human Mistakes

Both humans and AI systems make mistakes, but the reasons behind those mistakes are very different. Understanding this difference is important because many people assume AI “thinks” like a human. In reality, AI and humans process information in completely different ways.

Humans make mistakes because of emotion, misunderstanding, memory limitations, stress, or incomplete knowledge. A person may forget information, misread a situation, or make a poor judgment under pressure.

AI systems make mistakes for another reason entirely.

Models such as ChatGPT and Google Gemini generate answers using statistical prediction. They analyze patterns in data and predict the most probable response. The system does not truly understand meaning, truth, or consequences.

This creates a major difference between human reasoning and AI hallucination.

Humans Use Reasoning and Real-World Experience

Humans learn from:

- lived experience

- observation

- emotional understanding

- logical reasoning

A person can often recognize when something “does not make sense” based on common sense and contextual awareness.

For example, humans can question suspicious information, identify contradictions, and evaluate consequences before making decisions.

Even when humans make mistakes, they usually understand the concept of truth and reality.

AI Uses Probability Instead of Understanding

AI models do not possess consciousness, awareness, or human-style reasoning.

Instead, they predict which words are most likely to come next based on patterns learned in training. This probabilistic process allows AI to generate fluent language, but it can also create hallucinations.

The system may produce:

- fake citations

- invented facts

- incorrect summaries

- misleading explanations

AI often sounds highly confident because the model optimizes for language fluency, not factual certainty.

This is why AI can generate convincing misinformation without recognizing the mistake.

Humans Understand Consequences Better

Humans can evaluate ethical, emotional, and practical consequences in real-world situations.

For example, a doctor understands that incorrect medical advice could seriously harm a patient. A cybersecurity analyst understands the risks of insecure code or false threat detection.

AI systems do not possess this awareness.

An AI chatbot may generate dangerous advice or incorrect information without understanding the potential outcome. The model processes text mathematically rather than morally or emotionally.

This limitation is one reason human oversight remains essential in critical fields.

AI Mistakes Scale Much Faster

Human mistakes usually affect smaller groups of people at a time. AI systems can spread errors at internet scale within seconds.

For example, one hallucinated AI-generated response can quickly spread across:

- websites

- social media

- news summaries

- automated systems

This scalability makes AI hallucinations potentially more dangerous than ordinary human mistakes.

AI and humans both make errors, but the nature of those errors is fundamentally different. Humans rely on reasoning and lived understanding, while AI relies on probabilistic pattern prediction. This difference explains why AI can sound intelligent and confident while still producing inaccurate information.

Comparison Table: AI Hallucination vs Human Mistakes

| Aspect | Human Mistakes | AI Hallucinations |
| --- | --- | --- |
| Core Cause | Limited knowledge, stress, emotion, or misunderstanding | Probabilistic prediction and pattern generation |
| Understanding | Uses real-world understanding and reasoning | Lacks true understanding and awareness |
| Decision Process | Based on logic, experience, and judgment | Based on statistical language patterns |
| Error Type | Misjudgment, memory errors, or incorrect reasoning | Fabricated facts, fake citations, or misleading outputs |
| Context Awareness | Understands emotional and situational context | Often struggles with complex or ambiguous context |
| Confidence Level | May show uncertainty when unsure | Often gives confident but incorrect answers |
| Ability to Verify Truth | Can question and reassess information | Cannot independently verify factual truth reliably |
| Ethical Awareness | Understands consequences and responsibility | Does not understand moral or real-world consequences |
| Learning Method | Learns through experience and observation | Learns from training datasets and pattern analysis |
| Scale of Impact | Usually limited to individuals or small groups | Can spread misinformation instantly at internet scale |

Which AI Models Hallucinate the Most?

AI hallucinations affect nearly every major large language model in 2026. No mainstream AI chatbot is completely free from factual errors, fabricated content, or misleading outputs.

Models such as ChatGPT, Google Gemini, Claude, and Microsoft Copilot all attempt to reduce hallucinations through different technical approaches. But each system still faces limitations involving reasoning, context handling, and factual grounding.

The important question is not simply “Which AI hallucinates the most?” The better question is:

Why do all large language models still hallucinate at all?

The answer lies in how these systems work.

All modern AI chatbots generate responses using probabilistic prediction. They analyze patterns in language and predict the most likely response based on training data and context. Because they do not truly understand truth or reality, hallucinations remain possible in every system.

However, some AI models perform better in certain situations because of differences in architecture, retrieval systems, safety training, and context management.

ChatGPT

ChatGPT is widely used for writing, coding, education, and research assistance. It generally performs well in structured explanations and conversational reasoning.

OpenAI has worked to reduce hallucinations through:

- reinforcement learning

- retrieval systems

- better alignment techniques

- stronger instruction-following models

However, ChatGPT can still:

- invent citations

- generate outdated information

- produce incorrect technical explanations

- hallucinate during complex reasoning tasks

The model sometimes prioritizes fluent responses over factual precision.

Google Gemini

Google Gemini benefits from deep integration with search and retrieval infrastructure. This can improve access to updated information and current events.

Gemini often performs well when:

- retrieving recent information

- summarizing web content

- handling multimodal tasks

But hallucinations can still occur, especially in:

- nuanced reasoning

- ambiguous prompts

- complex factual interpretation

Like other LLMs, Gemini may generate confident but misleading outputs if context becomes unclear.

Claude

Claude is developed by Anthropic, a company that focuses heavily on AI safety and alignment research. Its models are often designed to behave more cautiously in uncertain situations.

Claude may:

- refuse risky responses more often

- acknowledge uncertainty more clearly

- provide safer conversational behavior

This cautious approach can reduce some hallucination risks, but it does not eliminate factual errors completely.

Claude can still struggle with:

- fabricated details

- reasoning inconsistencies

- long-context confusion

Microsoft Copilot

Microsoft Copilot integrates AI directly into productivity tools, coding workflows, and enterprise systems.

Copilot benefits from:

- enterprise data integration

- contextual productivity workflows

- real-time assistance features

In coding tasks, retrieval-based suggestions can improve factual grounding. However, AI-generated code may still contain:

- security flaws

- incorrect logic

- outdated syntax

- hallucinated functions

Enterprise integration improves usefulness, but human verification remains necessary.
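
One simple guard against hallucinated functions is to confirm that a suggested name actually exists before using it. The sketch below assumes the suggestion refers to a Python module installed locally, and `symbol_exists` is a hypothetical helper name. Importing a module runs its code, so this check is only appropriate for packages you already trust.

```python
import importlib


def symbol_exists(module_name: str, attr_path: str) -> bool:
    """Check that a module can be imported and exposes the dotted attribute."""
    try:
        obj = importlib.import_module(module_name)
    except ImportError:
        return False  # the module itself does not exist or cannot load
    for part in attr_path.split("."):
        if not hasattr(obj, part):
            return False  # the suggested function or class is not there
        obj = getattr(obj, part)
    return True


print(symbol_exists("json", "loads"))         # True
print(symbol_exists("json", "parse_string"))  # False
```

A passing check only shows that the name exists. It says nothing about whether the suggested code is secure or logically correct, so code review and testing are still needed.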

Why Retrieval Systems Matter

One major difference between AI models involves retrieval capability.

Some systems rely more heavily on Retrieval-Augmented Generation (RAG), which allows the AI to access external information sources in real time before generating responses.

Better retrieval systems can help reduce:

- outdated answers

- fabricated facts

- unsupported claims

However, retrieval alone does not fully solve hallucinations. The AI can still misinterpret retrieved information or combine it incorrectly.

Safety and Alignment Approaches Differ

AI companies also use different alignment and safety strategies.

Some models prioritize:

- cautious responses

- refusal systems

- uncertainty handling

- harmful content prevention

Others focus more heavily on:

- creativity

- conversational fluency

- broad instruction following

These design choices affect how hallucinations appear in different AI systems.

For example, one model may hallucinate less often but refuse more answers. Another may produce more detailed responses but increase factual risks.
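
This trade-off can be illustrated with a toy calculation. The sketch assumes each answer comes with a confidence score, which is a simplification, because real chatbots do not necessarily expose a calibrated score. The sample results are invented for the example.

```python
def evaluate(threshold: float, results: list) -> dict:
    """Count answered, refused, and wrong responses at a confidence cutoff."""
    answered = [correct for confidence, correct in results if confidence >= threshold]
    return {
        "answered": len(answered),
        "refused": len(results) - len(answered),
        "wrong": sum(1 for correct in answered if not correct),
    }


# Invented (confidence, was_correct) pairs for six questions.
results = [
    (0.95, True), (0.90, True), (0.80, False),
    (0.70, True), (0.55, False), (0.40, False),
]

print(evaluate(0.50, results))  # {'answered': 5, 'refused': 1, 'wrong': 2}
print(evaluate(0.85, results))  # {'answered': 2, 'refused': 4, 'wrong': 0}
```

Raising the cutoff removes the wrong answers in this example, but it also refuses one question that would have been answered correctly.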

Which AI Is Most Accurate?

There is currently no universally perfect AI chatbot.

Accuracy depends heavily on:

- the type of question

- prompt quality

- retrieval access

- reasoning complexity

- domain specialization

In some cases, one model may outperform others in coding or research tasks. In other situations, another model may handle context or factual retrieval better.

The most important takeaway is simple:

All modern large language models can hallucinate, and users should verify important information regardless of which AI platform they use.

AI Hallucination in Different Industries

AI hallucinations affect far more than casual chatbot conversations. Many industries now use AI systems for research, automation, analysis, and decision-making. Because of this, inaccurate AI outputs can create serious professional and operational risks.

The impact varies across industries, but the core problem remains the same: AI can generate convincing information that is not always correct.

Healthcare

Healthcare requires high factual accuracy, which makes AI hallucinations especially dangerous.

Medical AI tools may assist with:

- symptom analysis

- clinical documentation

- patient communication

- research summarization

However, an AI system can still generate:

- incorrect diagnoses

- unsafe treatment suggestions

- false drug information

- fabricated medical references

For example, an AI chatbot may misunderstand symptoms and recommend inappropriate actions. Inaccurate healthcare information can directly affect patient safety and medical decision-making.

This is why doctors and healthcare professionals cannot rely entirely on AI-generated medical advice.

Cybersecurity

AI is increasingly used in cybersecurity for:

- threat analysis

- malware detection

- code generation

- incident response

But hallucinations in cybersecurity can create serious vulnerabilities.

An AI model may:

- generate insecure code

- recommend unsafe configurations

- misclassify threats

- provide incorrect remediation steps

For example, an AI coding assistant could suggest outdated authentication methods that expose systems to attacks.

Cybersecurity requires precision and verification. Even small AI-generated errors can create major security risks.

Education

Students and educators increasingly use AI tools for:

- research

- essay writing

- tutoring

- summarization

AI can improve learning efficiency, but hallucinations create misinformation risks in academic environments.

A chatbot may:

- invent references

- provide incorrect explanations

- generate false historical claims

- simplify topics inaccurately

Students who rely entirely on AI without verification may unknowingly submit inaccurate information in assignments or research work.

This issue also affects critical thinking. Overdependence on AI-generated answers can reduce independent analysis and source evaluation skills.

Law

The legal industry has already seen real-world examples of AI hallucinations causing professional problems.

AI systems used for:

- legal research

- contract analysis

- document drafting

- case summarization

can sometimes generate fabricated legal citations or incorrect interpretations.

In some reported cases, lawyers submitted court filings containing AI-generated citations to legal cases that do not exist.

Because legal systems require precise factual and procedural accuracy, hallucinations create major ethical and professional risks.

Legal professionals must therefore verify every AI-generated reference carefully.

Journalism

News organizations increasingly experiment with AI for:

- article summaries

- headline generation

- content drafting

- translation

But journalism depends heavily on factual accuracy and context.

AI hallucinations can:

- distort news events

- invent quotes

- misrepresent statistics

- remove critical context

A small factual error in an AI-generated news summary can spread misinformation rapidly online.

This becomes especially dangerous during:

- elections

- financial reporting

- geopolitical conflicts

- public emergencies

Journalists still need human editorial review to ensure credibility and accuracy.

AI hallucinations affect nearly every industry adopting generative AI technologies. As AI systems become more integrated into professional workflows, the importance of verification, oversight, and responsible deployment continues to grow.

How Companies Are Reducing AI Hallucinations

AI companies understand that hallucinations remain one of the biggest challenges in modern generative AI systems. Because of this, researchers and developers are building new techniques to improve factual accuracy, reasoning quality, and reliability.

Major organizations such as OpenAI, Google, Microsoft, and Anthropic are actively investing in safer and more trustworthy AI systems.

The goal is not only to generate fluent language but also to reduce misinformation and improve factual grounding.

Retrieval-Augmented Generation (RAG)

Retrieval-Augmented Generation, commonly called RAG, has become one of the most important techniques for reducing hallucinations.

Traditional AI models rely mainly on what they learned from training data. RAG systems work differently. They retrieve information from external sources in real time before generating a response.

This approach helps AI systems:

- Access updated information

- Improve factual grounding

- Reduce fabricated answers

- Reference trusted documents

For example, enterprise AI assistants can retrieve information directly from internal company databases, documentation, or verified knowledge systems before answering user questions.

RAG does not eliminate hallucinations completely, but it significantly improves reliability compared to models that depend only on static training data.

Grounding Techniques

Grounding helps AI systems connect responses to verified sources or factual context.

Instead of generating free-form answers entirely from prediction patterns, grounded AI systems attempt to anchor outputs to:

- Databases

- Trusted documents

- Search results

- Enterprise knowledge repositories

This process reduces the chance of fabricated information appearing in responses.
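
A very rough way to picture a grounding check is to ask whether each sentence of an answer is backed by a trusted source. The sketch below uses simple word overlap as a stand-in. The function name and the 0.6 threshold are assumptions, and real systems rely on much stronger methods than word matching.

```python
import re


def _tokens(text: str) -> set:
    """Lowercase the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def unsupported_sentences(answer: str, sources: list, min_overlap: float = 0.6) -> list:
    """Return sentences whose words are mostly absent from every source."""
    source_tokens = [_tokens(s) for s in sources]
    flagged = []
    for sentence in re.split(r"(?<=[.!?])\s+", answer.strip()):
        words = _tokens(sentence)
        if not words:
            continue
        # Best share of this sentence's words found in any single source.
        best = max((len(words & st) / len(words) for st in source_tokens), default=0.0)
        if best < min_overlap:
            flagged.append(sentence)
    return flagged


sources = ["The warranty covers parts and labor for two years."]
answer = ("The warranty covers parts and labor for two years. "
          "It also includes free annual upgrades.")
print(unsupported_sentences(answer, sources))
# ['It also includes free annual upgrades.']
```

Word overlap cannot tell whether a sentence contradicts its source, so a flagged list like this is a prompt for human review rather than a verdict.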

Grounding is especially important in industries such as:

- Healthcare

- Finance

- Law

- Cybersecurity

where factual accuracy matters greatly.

Many modern AI systems now combine retrieval and grounding techniques to improve trustworthiness.

Alignment Research

Alignment research focuses on making AI systems behave more safely and reliably according to human goals and expectations.

Researchers want AI models to:

- Avoid misleading outputs

- Admit uncertainty honestly

- Follow instructions safely

- Reduce harmful hallucinations

Companies such as Anthropic and OpenAI continue investing heavily in alignment research.

Modern AI systems increasingly attempt to recognize uncertain situations instead of confidently inventing information.

This area remains one of the most important long-term challenges in AI development.

Human-in-the-Loop AI

Many organizations now use “human-in-the-loop” systems to reduce AI risks.

In this approach, humans review, verify, or approve AI-generated outputs before critical decisions are made.

Human oversight remains important because AI systems still struggle with:

- Ambiguity

- Ethical judgment

- Factual verification

- Real-world reasoning

For example:

- Doctors review AI-generated medical suggestions

- Cybersecurity analysts verify AI threat reports

- Editors check AI-generated news summaries

- Lawyers validate AI-generated legal research

Human review helps catch hallucinations before they create serious consequences.
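
In software terms, a human-in-the-loop step is often just a gate that holds certain outputs until a person signs off. The following is a minimal sketch under stated assumptions: the domain labels, the `Draft` and `ReviewGate` classes, and the status strings are all hypothetical and do not describe any specific product.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical set of domains that always require expert sign-off.
HIGH_RISK_DOMAINS = {"medical", "legal", "financial", "security"}


@dataclass
class Draft:
    text: str
    domain: str
    approved_by: Optional[str] = None


@dataclass
class ReviewGate:
    pending: list = field(default_factory=list)

    def submit(self, draft: Draft) -> str:
        """Hold high-risk drafts for review; release everything else."""
        if draft.domain in HIGH_RISK_DOMAINS:
            self.pending.append(draft)
            return "held_for_review"
        return "released"

    def approve(self, draft: Draft, reviewer: str) -> str:
        """Record the reviewer and release a previously held draft."""
        self.pending.remove(draft)
        draft.approved_by = reviewer
        return "released"


gate = ReviewGate()
legal_draft = Draft("Summary of the cited precedent.", "legal")
print(gate.submit(legal_draft))                   # held_for_review
print(gate.approve(legal_draft, "staff_lawyer"))  # released
```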

Model Fine-Tuning

Fine-tuning allows developers to train AI systems on specialized and higher-quality datasets.

This process improves performance in specific domains such as:

- Medicine

- Coding

- Finance

- Customer support

Fine-tuned models can better follow instructions, reduce irrelevant outputs, and improve contextual accuracy.

For example, an AI assistant trained specifically on verified cybersecurity datasets will usually produce more reliable security guidance than a general-purpose chatbot.

However, fine-tuning still cannot guarantee perfect factual accuracy. The model may still hallucinate under difficult or ambiguous conditions.

AI hallucination reduction is now one of the central goals of modern AI research. Companies are combining retrieval systems, grounding methods, alignment research, human oversight, and specialized training to build safer and more reliable AI systems.

Although hallucinations still exist in 2026, these improvements are gradually making AI outputs more trustworthy across real-world applications.

Future of AI Accuracy (2026 and Beyond)

AI systems are improving rapidly, and future models will likely become more accurate, reliable, and context-aware. Researchers and technology companies continue investing heavily in reducing hallucinations and improving factual consistency.

But the future of AI accuracy will depend on more than larger models. The industry is now focusing on reasoning quality, alignment, safety, and real-world reliability.

Companies such as OpenAI, Google, and Anthropic are actively developing systems that generate more trustworthy responses while reducing misinformation risks.

Smarter AI Models With Better Reasoning

Early AI systems mainly focused on generating fluent language. Modern models are shifting toward stronger reasoning and contextual understanding.

Future AI systems will likely improve in areas such as:

- Multi-step reasoning

- Fact verification

- Long-context handling

- Real-time information retrieval

This shift is important because many hallucinations happen when AI models prioritize language fluency over logical accuracy.

New architectures may also improve how AI handles memory, context retention, and complex instructions. This could reduce problems caused by context confusion and fragmented reasoning.

AI coding assistants, research tools, and enterprise chatbots are already becoming more specialized and task-aware in 2026.

Alignment Research Is Becoming a Major Priority

Alignment research focuses on making AI systems behave more safely, accurately, and consistently with human goals.

Researchers want AI systems to:

- Avoid misleading outputs

- Admit uncertainty honestly

- Follow human instructions safely

- Reduce harmful hallucinations

This area has become one of the most important fields in modern AI development.

For example, developers now train models to respond more cautiously when information is unclear or unverifiable. Instead of inventing answers, future systems may increasingly acknowledge uncertainty or request clarification.

Alignment research also helps reduce:

- Bias

- Unsafe outputs

- Deceptive behavior

- Misinformation risks

Organizations such as OpenAI and Anthropic continue publishing research focused on safer and more reliable AI systems.

Retrieval Systems Will Improve Factual Accuracy

One major improvement involves Retrieval-Augmented Generation (RAG) systems.

Instead of relying only on training data, AI models increasingly retrieve information from trusted external sources at query time. This allows the system to consult up-to-date knowledge before generating a response.

As retrieval systems improve, AI tools may become better at:

- Citing sources accurately

- Reducing fabricated information

- Handling current events

- Improving enterprise reliability

This approach is already changing how businesses deploy AI for research, support, and knowledge management.
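The retrieval step can be illustrated with a toy example. The sketch below assumes a small in-memory list of trusted documents and ranks them by simple word overlap; real RAG systems typically use a search index or vector database instead. The `DOCUMENTS` contents and the function names are hypothetical, and the final call to a language model is left out.

```python
import re

# Toy "trusted source" store. The contents are made up for illustration.
DOCUMENTS = [
    "The refund window for online orders is 30 days from delivery.",
    "Support is available by email on weekdays from 9:00 to 17:00.",
    "Password resets require access to the registered email address.",
]


def tokenize(text: str) -> set[str]:
    """Split text into a set of lowercase words and numbers."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: list[str], top_k: int = 1) -> list[str]:
    """Rank documents by how many words they share with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question: str, documents: list[str]) -> str:
    """Place the retrieved text in front of the question to ground the answer."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, documents))
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say so.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


print(build_prompt("How long is the refund window?", DOCUMENTS))
```

The prompt instructs the model to rely on the retrieved context and to say when the answer is missing, which is how retrieval is meant to reduce fabricated information.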

Human Oversight Will Still Matter

Even with major advancements, human verification will remain important.

AI systems may become more accurate, but they will still face limitations involving:

- Ambiguity

- Ethics

- Real-world judgment

- Rapidly changing information

Critical decisions in medicine, law, finance, cybersecurity, and scientific research will still require human expertise and accountability.

The future of AI accuracy is therefore not about replacing human judgment completely. It is about building systems that work more safely alongside humans while reducing hallucinations and factual errors over time.

FAQs on Why AI Gives Wrong Answers

Why does AI hallucinate?

AI hallucinates because it predicts language patterns instead of truly understanding facts. Models such as ChatGPT and Google Gemini generate responses from probabilities learned from patterns in their training data. When information is unclear or missing, the AI may create convincing but inaccurate answers.

Hallucinations also happen because of:

- Poor training data

- Context confusion

- Weak reasoning

- Outdated information

The model tries to complete patterns fluently, even when the answer is incorrect.

Can AI be trusted?

AI can be useful, but users should not trust it blindly.

Modern AI systems perform well for:

- Brainstorming

- Summarization

- Coding assistance

- Content generation

However, AI can still produce false or misleading information confidently. Important outputs should always be verified using trusted sources and human judgment.

AI works best as an assistant, not as a perfect authority.

How can users detect AI mistakes?

Users can detect AI mistakes by checking responses carefully and verifying important claims.

Warning signs often include:

- Fake citations

- Vague explanations

- Contradictory answers

- Overly confident claims without evidence

Cross-checking with official sources, research papers, or expert documentation helps identify hallucinations quickly.

Asking AI to explain its reasoning step by step can also expose logical errors or inconsistencies.
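One of the warning signs above, contradictory answers, can be checked mechanically by asking the same question several times and measuring how often the answers agree. The sketch below is a rough heuristic under stated assumptions: the answers are short strings collected by the caller, and the function names and the 0.6 threshold are hypothetical. Agreement does not prove an answer is correct; it only flags instability.

```python
from collections import Counter


def normalize(answer: str) -> str:
    """Lowercase and trim so trivially different answers compare equal."""
    return " ".join(answer.lower().strip().rstrip(".").split())


def agreement(answers: list[str]) -> tuple[str, float]:
    """Return the most common answer and the share of samples that match it."""
    if not answers:
        raise ValueError("at least one answer is required")
    counts = Counter(normalize(a) for a in answers)
    top_answer, top_count = counts.most_common(1)[0]
    return top_answer, top_count / len(answers)


def looks_unreliable(answers: list[str], min_agreement: float = 0.6) -> bool:
    """Flag a question when repeated answers disagree too often."""
    _, share = agreement(answers)
    return share < min_agreement


# Answers collected by asking the same question four times (made-up data).
samples = ["1912", "1913", "1911", "1912."]
print(looks_unreliable(samples))  # True
```

A flagged question is a good candidate for the cross-checking described above, since the model itself cannot settle on one answer.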

Is AI getting better?

Yes. AI systems are improving rapidly in reasoning, context handling, and factual accuracy.

Companies such as OpenAI and Google continue developing better models with stronger safety and retrieval systems.

Technologies such as Retrieval-Augmented Generation (RAG), fine-tuning, and alignment research are helping reduce hallucinations and improve reliability.

Even so, no AI system in 2026 is completely error-free. Human verification remains important for critical tasks.

Conclusion

AI systems have transformed how people search for information, write content, analyze data, and solve problems. Tools such as ChatGPT and Google Gemini can generate fast and highly detailed responses, but they still make mistakes.

AI gives wrong answers because it predicts patterns instead of truly understanding reality. Problems such as hallucinations, biased training data, weak reasoning, and context misinterpretation can lead to inaccurate outputs that sound convincing.

These errors create real risks in areas like medicine, cybersecurity, research, business, and education. A confident AI response does not always mean the information is correct.

At the same time, AI accuracy is improving quickly. Researchers are developing better reasoning systems, safer alignment methods, and retrieval technologies that reduce hallucinations and improve factual reliability.

Still, human judgment remains essential.

The best approach in 2026 is neither to trust AI blindly nor to reject it completely. Users should treat AI as a powerful assistant that requires verification, critical thinking, and responsible use.

AI can improve productivity and learning significantly, but accuracy still depends on careful human oversight.

Last Updated: May 13, 2026